This post was originally published on this site.

Everyone’s talking about “AI agents” now. But what’s the real difference between generative AI and agentic AI? And which is the best tool for saving you precious time across all your marketing workflows?

A marketer opens ChatGPT, types a prompt, and gets a (probably pretty bad) blog post draft back in 30 seconds. That’s generative AI. Their colleague opens Agent A, gives it a target keyword, and walks away. Twenty minutes later, they have a full SEO research report, without touching the keyboard again: keyword data pulled, SERPs analyzed, content gaps identified, recommendations written. That’s agentic AI.

In both cases, you’re using the same underlying technology, but the results (and effort required to reach them) are very different.

Generative AI creates content on demand, but agentic AI takes action autonomously. And if you’re a marketer deciding which tools to adopt, which workflows to automate, or how much human oversight to keep, you need to understand the difference.

Try Agent A: the new marketing agent from Ahrefs

We’ve just released Agent A, an AI agent with unrestricted access to Ahrefs data that can actually do marketing for you.

Run keyword research, analyze your competitors, optimize your content, make technical SEO fixes, and much more—all automatically, using state-of-the-art agentic AI models and Ahrefs’ world-class data.

In this article, I’ll explain the difference between generative and agentic AI, show you what each looks like in practice, and help you figure out where each one fits in your day-to-day work.

Generative AI produces new content (text, images, video, code) from a prompt. The key word is generates: the model doesn’t fetch a pre-written answer from a database somewhere. It creates something new each time, predicting the statistically most likely next token (a word, part of a word, a number, or a punctuation mark) based on patterns learned from massive training datasets.
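If you’re curious what “predicting the next token” looks like, here is a deliberately tiny sketch. The probability table is made up for illustration, and it only looks at the previous word, whereas a real model learns its probabilities from training data and conditions on the whole prompt.

```python
import random

# Hypothetical, hand-written probabilities for illustration only.
# A real model learns these from training data and conditions on
# the entire context, not just the previous token.
NEXT_TOKEN_PROBS = {
    "best": {"SEO": 0.5, "marketing": 0.3, "coffee": 0.2},
    "SEO": {"tools": 0.7, "tips": 0.3},
    "marketing": {"tools": 0.4, "strategy": 0.6},
}


def generate(start, steps, seed=None):
    """Sample up to `steps` next tokens, one at a time, starting from `start`."""
    rng = random.Random(seed)
    tokens = [start]
    for _ in range(steps):
        options = NEXT_TOKEN_PROBS.get(tokens[-1])
        if not options:
            break  # no known continuation for this token
        choices, weights = zip(*options.items())
        tokens.append(rng.choices(choices, weights=weights, k=1)[0])
    return tokens


print(" ".join(generate("best", steps=2)))
```

Run it a few times without a seed and the output changes, which is the same reason the same prompt can give you a different draft each time.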

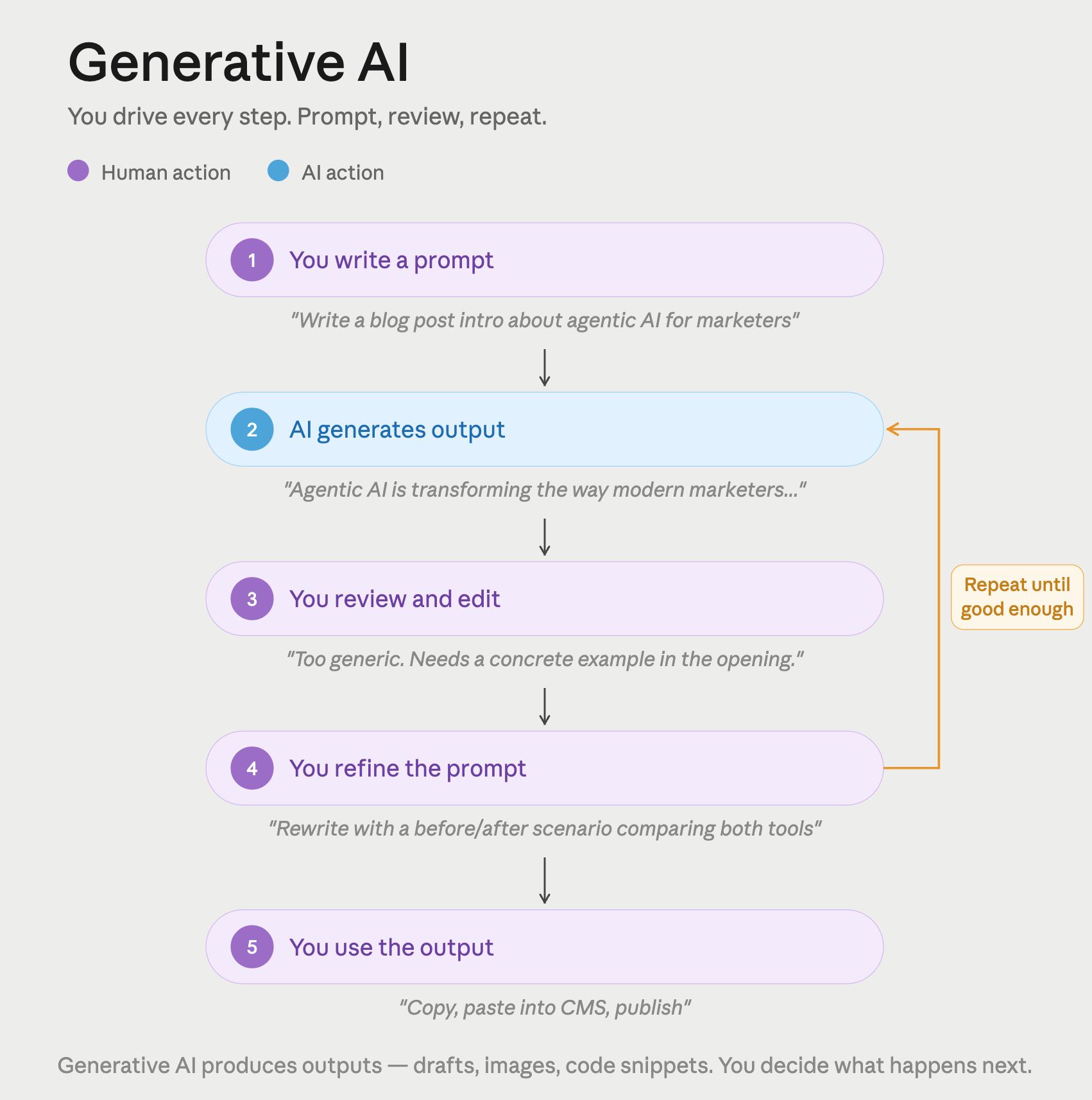

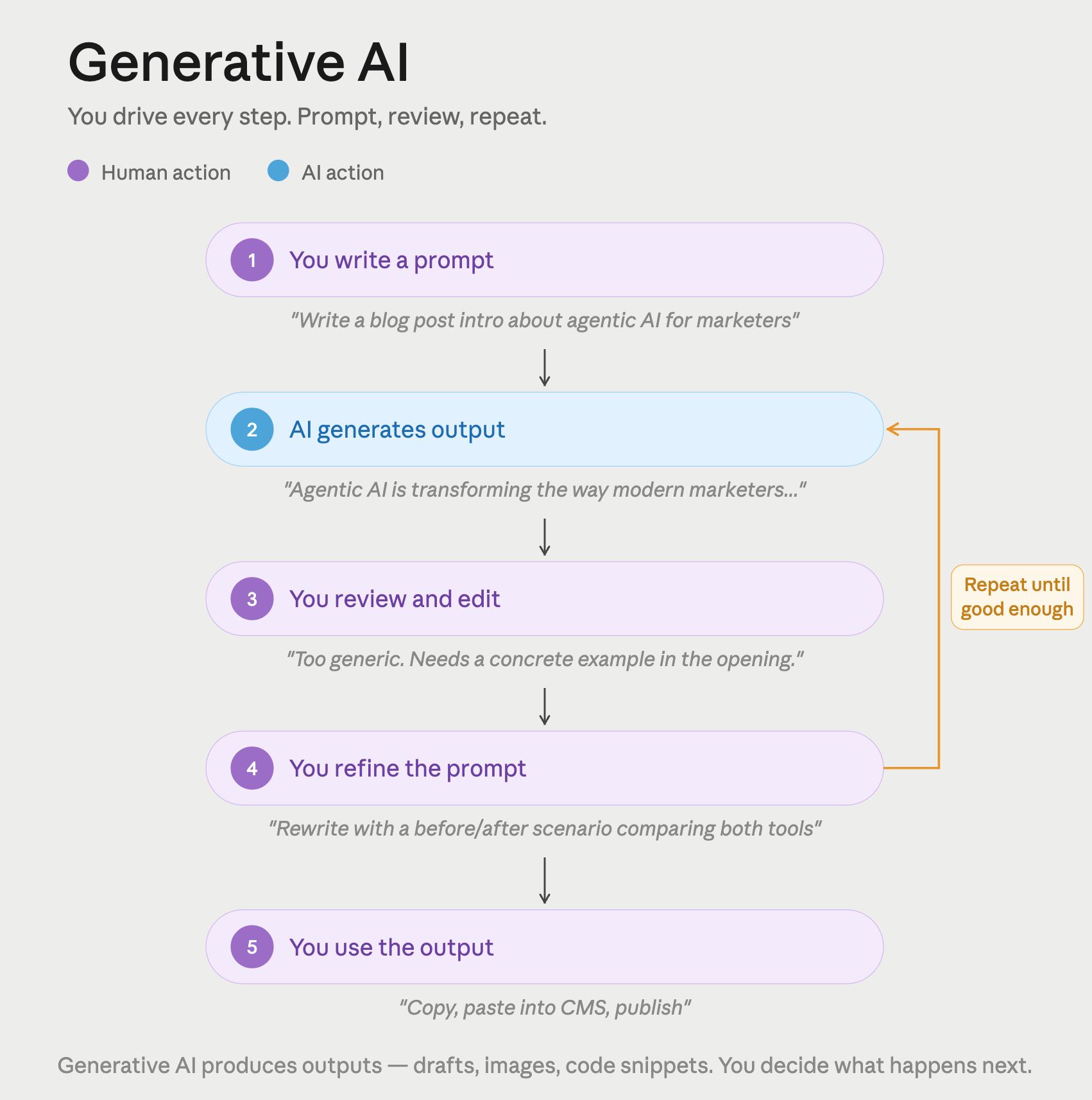

The other defining trait is that generative AI is fundamentally reactive. You prompt it, it responds, and then it stops. Every output requires a human to trigger the next step—reviewing the result, deciding what to do with it, and prompting again if needed. Some tools chain prompts automatically (more on that in a moment), which blurs this line slightly, but the underlying model is still waiting for instructions at each handoff.

Think of it like a very capable colleague who will answer any question you put to them, but never acts on their own initiative.

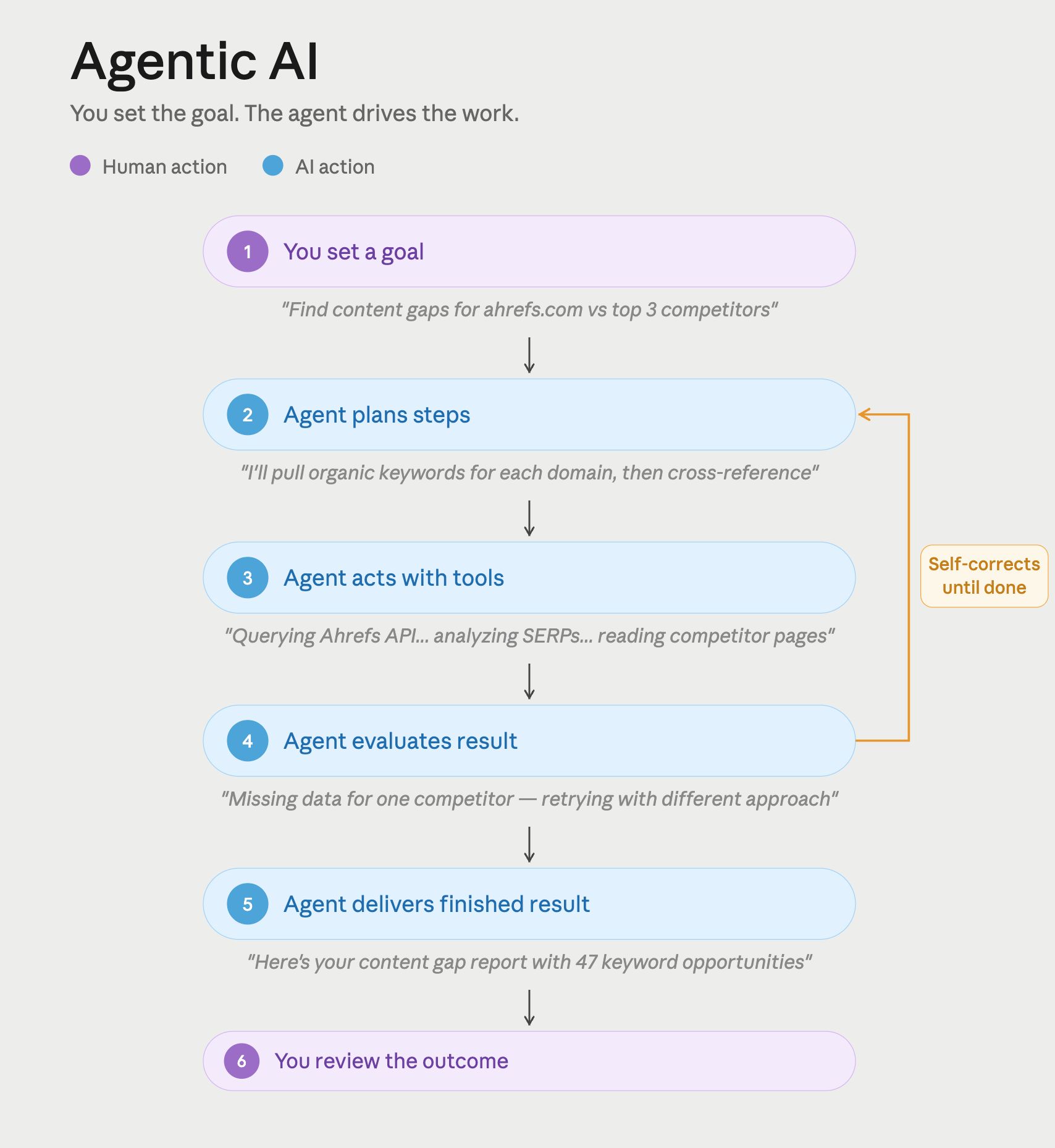

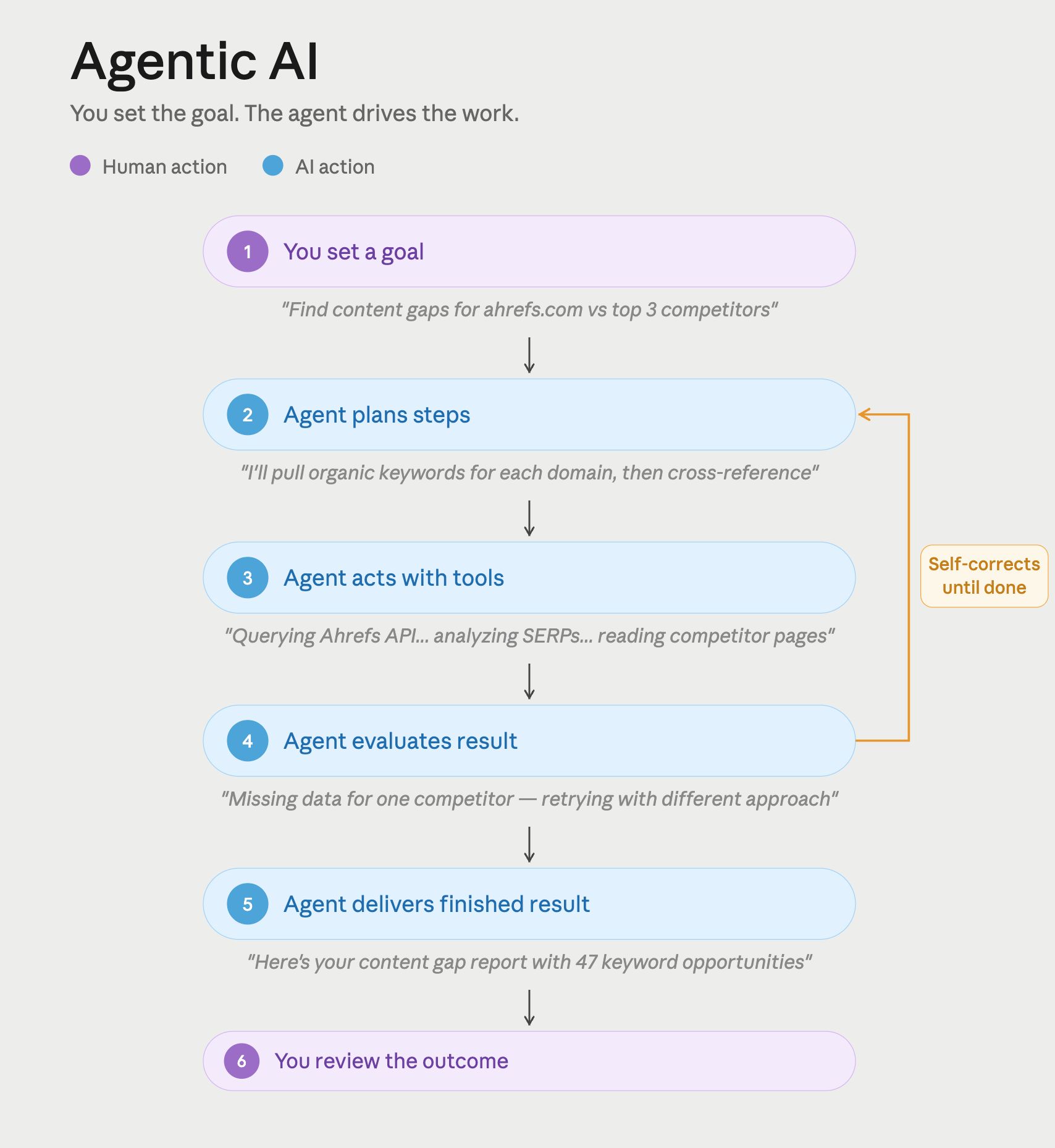

Agentic AI pursues a goal across multiple steps without waiting for human input at each one. Given an objective, it plans, executes, checks results, and iterates, all on its own.

Agentic AI works like a continuous loop: observe → reason → act → observe again. Agentic systems can do more than just answer questions (like a chatbot); they can use tools (search engines, APIs, code execution environments, and file systems) to take real-world actions. Memory and context carry across the entire task, not just a single exchange.

If you ask a generative AI tool to “research our top competitors and draft a summary report,” it’ll give you a decent attempt based on its training data. If you give the same instruction to an agentic AI system, it can also search the web, read competitor pages, use tools like the Ahrefs MCP, synthesize its findings, and write the report, all unprompted, start to finish.

Most marketers are already deep into generative AI, even if they don’t always call it that.

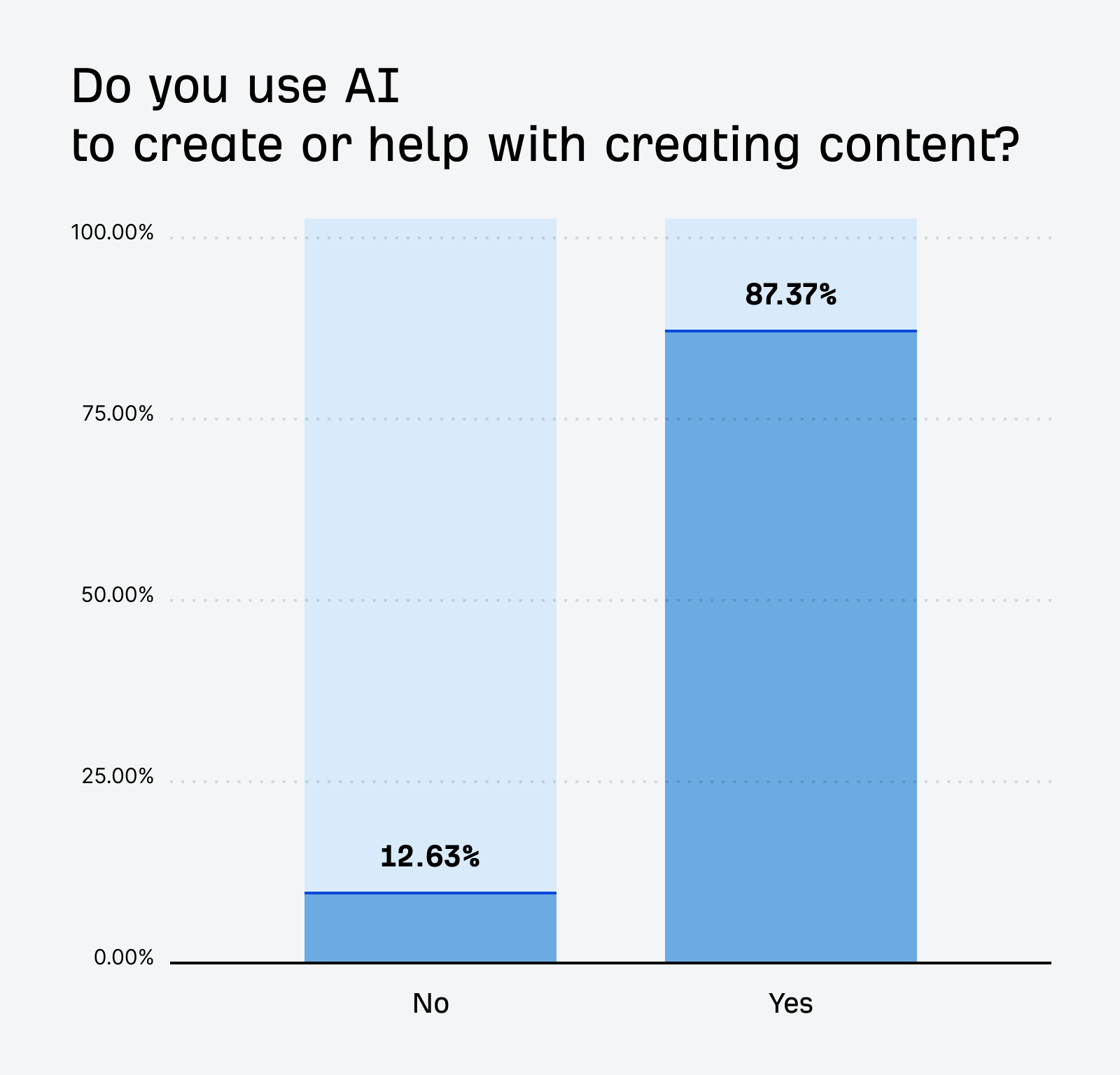

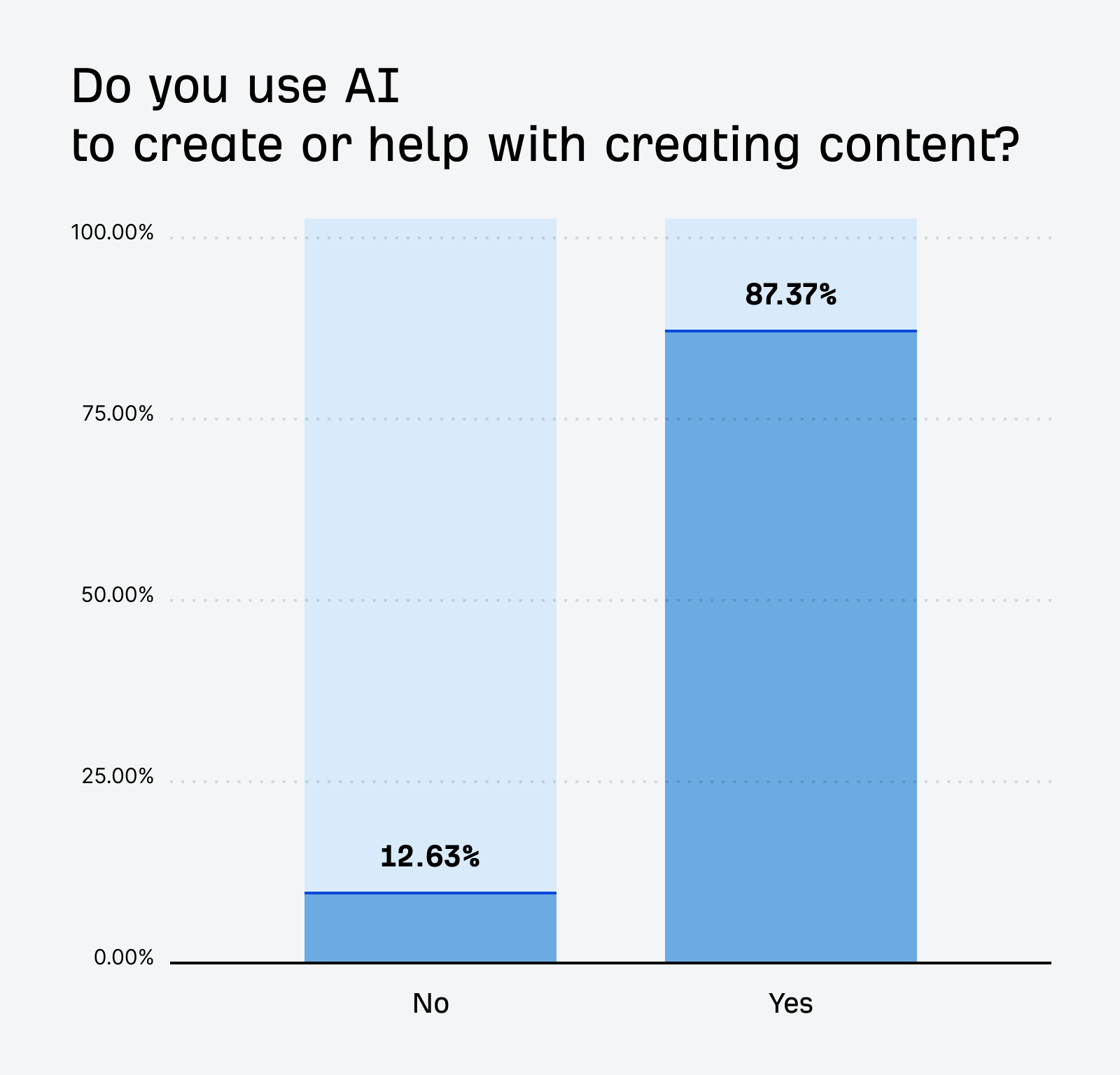

Text generation is the most mature category. ChatGPT, Claude, and Gemini are the dominant tools, used for drafting, editing, ideation, summarizing research, and rewriting content at scale. According to Wharton’s 2025 AI Adoption Report, 82% of enterprises use generative AI at least weekly, and 46% use it daily. Those numbers have climbed 10 and 17 percentage points, respectively, in a single year. And when we surveyed almost 900 marketers, 87% reported using generative AI to help create written content.

Image generation has become a staple for social, design, and advertising teams. Nano Banana (aka Gemini’s image models), GPT Image 2, and Adobe Firefly are powerful go-tos for ad creatives, social images, and concept visuals. (And personally, I still have a soft spot for the aesthetic style of Midjourney.)

Video generation is the fastest-moving frontier. Tools like Sora, Runway, and HeyGen produce product demos, social video, and spokesperson clips from a text prompt or a reference image. HeyGen in particular has seen rapid adoption for creating localized videos without a huge international marketing crew.

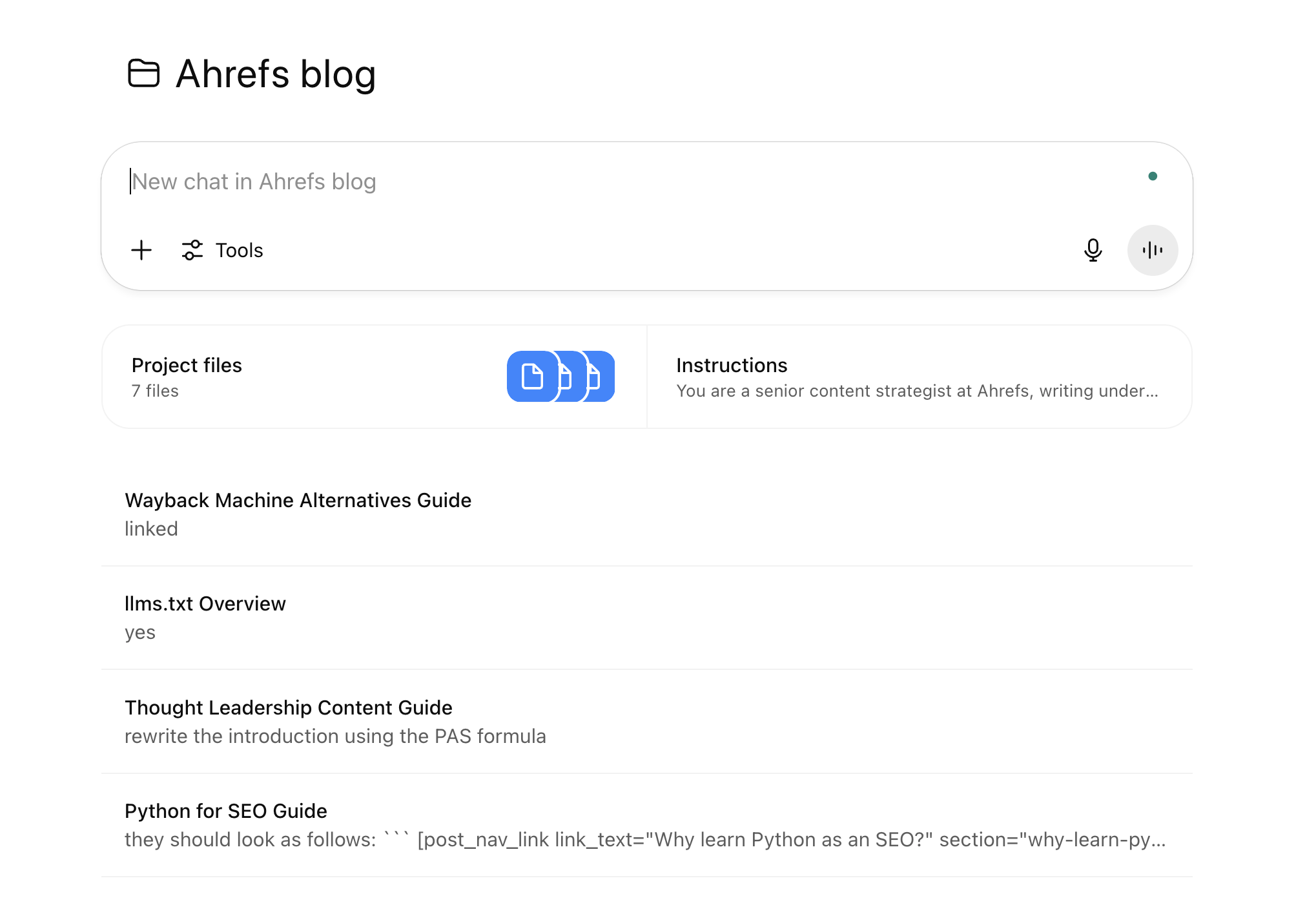

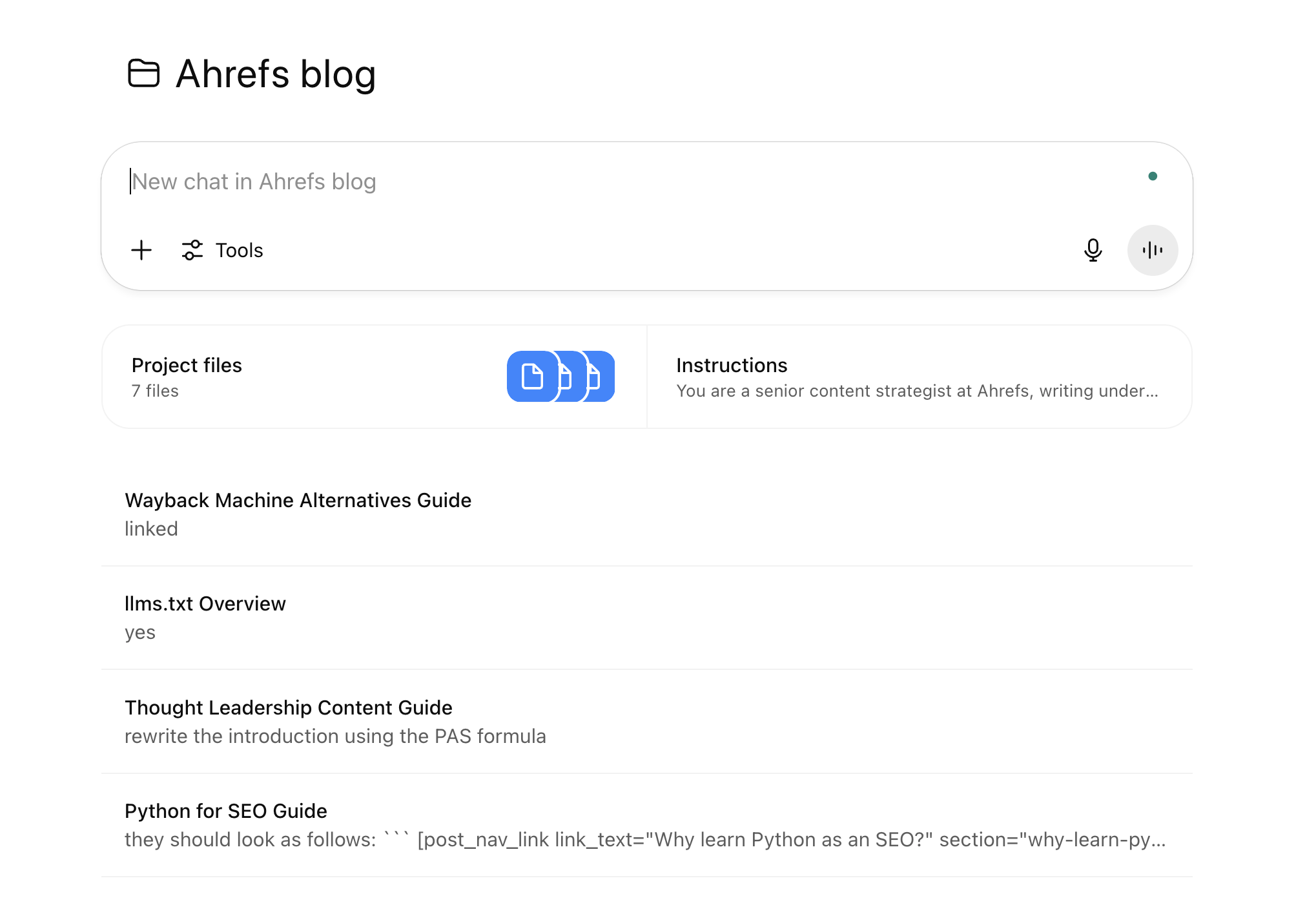

All of these tools have an important trait in common: every output requires a human to decide what happens next. The model completes its task and waits. Even “assistants” with persistent memory, such as the brand-aware custom GPTs we built for our first AI content system, don’t close the loop on tasks autonomously. They’re still reactive at their core.

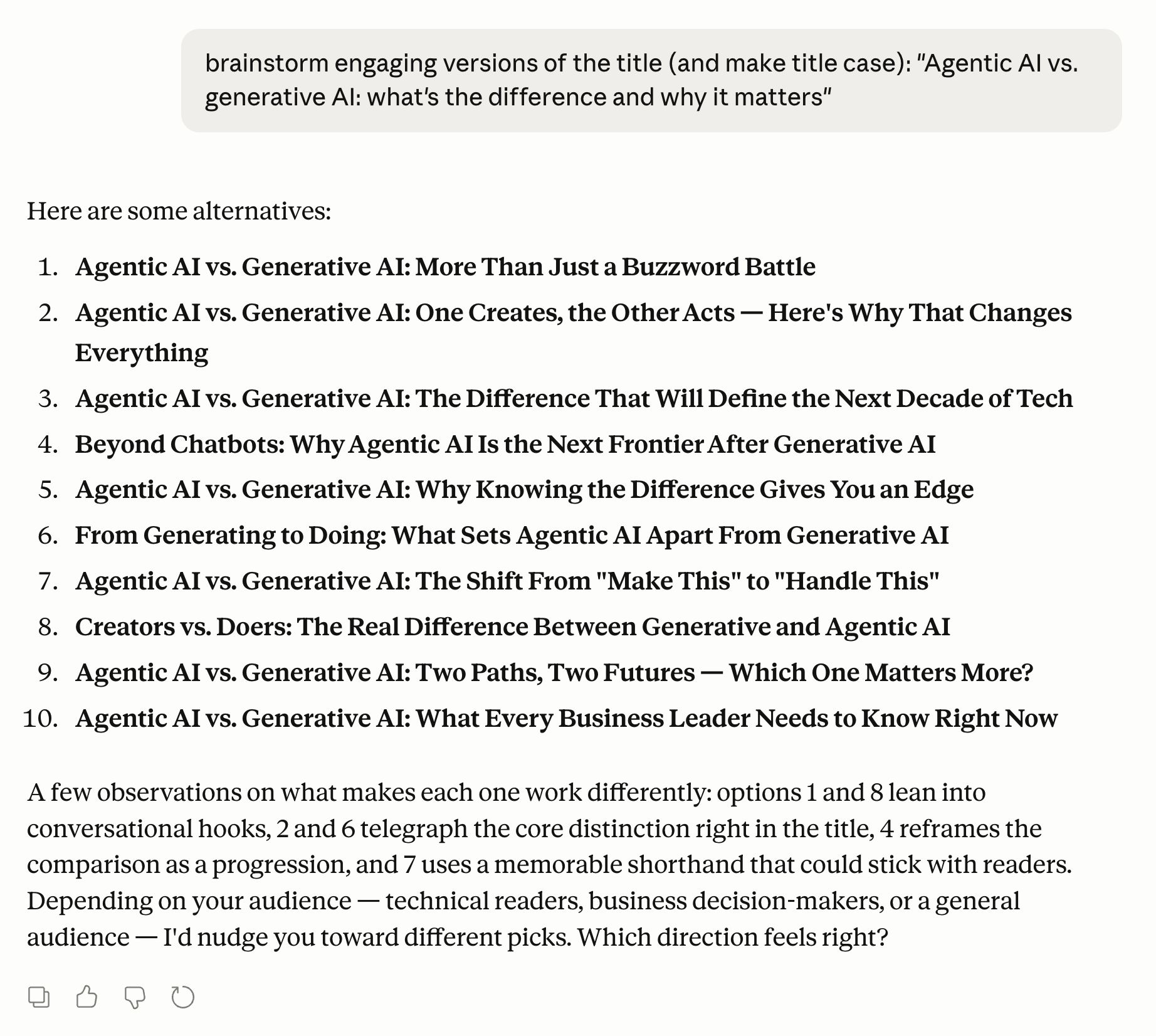

The custom GPTs we built for our AI content workflow. The setup worked well, but it was still extremely manual.

Agentic AI is moving fast, and the tools are more capable than most marketers realize.

Coding agents are the most mature example. Lovable turns a product description into a deployable web app with minimal back-and-forth—you describe what you want to build, and it writes, tests, and iterates until it works. Cursor brings the same agentic loop to an IDE (a code editor). Claude Code from Anthropic goes further: it reads an existing codebase, identifies what needs fixing, writes the changes, runs the tests, and iterates on failures without being asked at each step. Complex tools and workflows can be built autonomously, without tons of back-and-forth.

I built this screenshot tool for creating Ahrefs blog post images in Lovable.

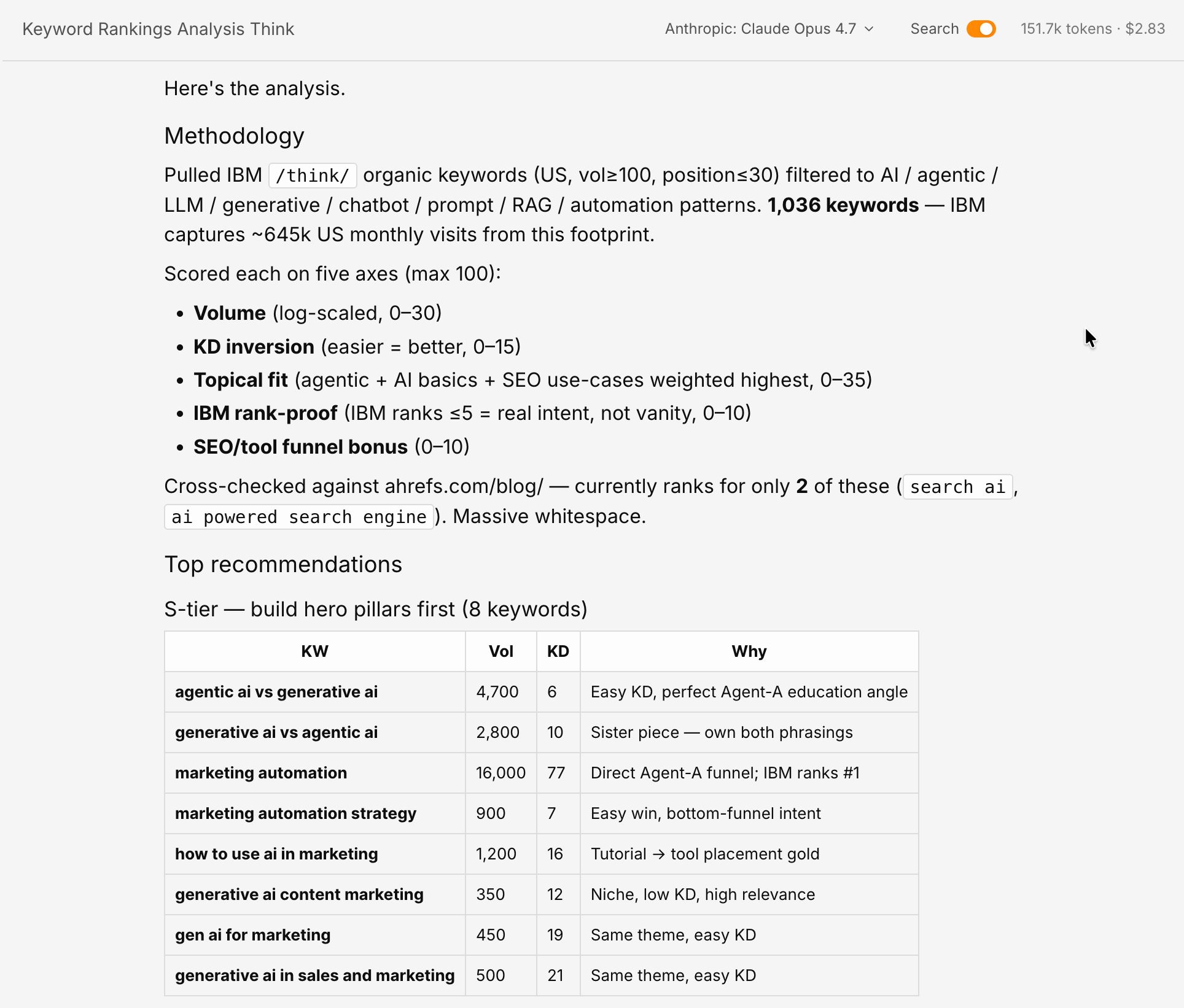

Marketing agents are the version most relevant to marketers. Ahrefs’ Agent A is a purpose-built SEO and content assistant that handles research and content workflows autonomously—pulling data from Ahrefs, analyzing it, and acting on it without requiring you to manually run each report. If you’ve ever spent an afternoon pulling keyword data, cross-referencing competitor pages, and organizing it into a brief, Agent A is built for exactly that job.

The actual Agent A chat that surfaced the keyword this blog post is targeting (meta!).

Multi-agent frameworks like AutoGPT and LangGraph chain specialized agents together to handle complex, multi-stage pipelines. You don’t need to know the technical details, but it’s worth understanding the concept: instead of one AI doing everything, these frameworks assign different parts of a task to different specialists. One agent handles research, another writes the copy, a third checks it for errors. The same division-of-labor logic that makes human teams effective applies to AI teams too.
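For the curious, the division-of-labor idea can be sketched in a few lines. This is not the AutoGPT or LangGraph API; the three “agents” below are hypothetical plain functions standing in for separate model calls, and the reviewer’s check is intentionally trivial.

```python
def research_agent(topic):
    # Hypothetical stand-in: a real research agent would search and read sources.
    return {"topic": topic, "notes": [f"fact about {topic}"]}


def writer_agent(brief):
    # Turns the research notes into a draft.
    draft = f"Draft on {brief['topic']} using {len(brief['notes'])} note(s)"
    return {**brief, "draft": draft}


def reviewer_agent(work):
    # Toy check: a real reviewer would look for factual and style errors.
    return {**work, "approved": bool(work["draft"].strip())}


# Each specialist receives the previous specialist's output.
PIPELINE = [research_agent, writer_agent, reviewer_agent]


def run_pipeline(topic):
    result = topic
    for agent in PIPELINE:
        result = agent(result)
    return result


print(run_pipeline("agentic AI"))
```

The useful property is that each specialist can be swapped or improved without touching the others, much like reassigning one role on a human team.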

These tools all work in the same fundamental way: you set a goal, the agent handles the execution, and you review the output rather than managing every step.

Importantly, agentic AI isn’t a separate technology from generative AI. It’s generative AI with extra infrastructure wrapped around it. The large language model at the center—GPT, Claude, Gemini—is the same whether you’re using it in a chatbot or an autonomous agent. What makes a system agentic is the extra scaffolding that lets it plan, use tools, remember what it’s done, and decide what to do next.

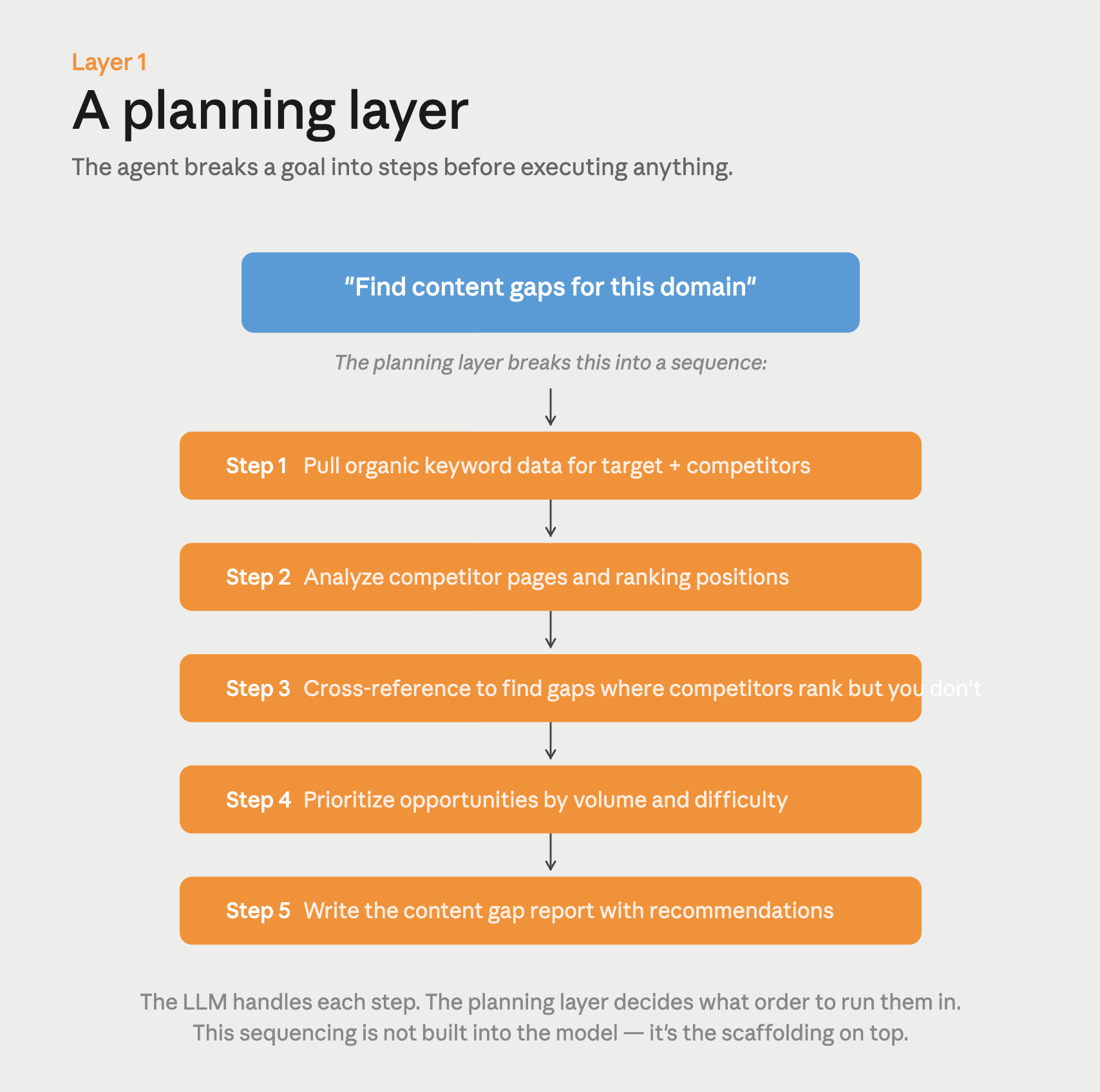

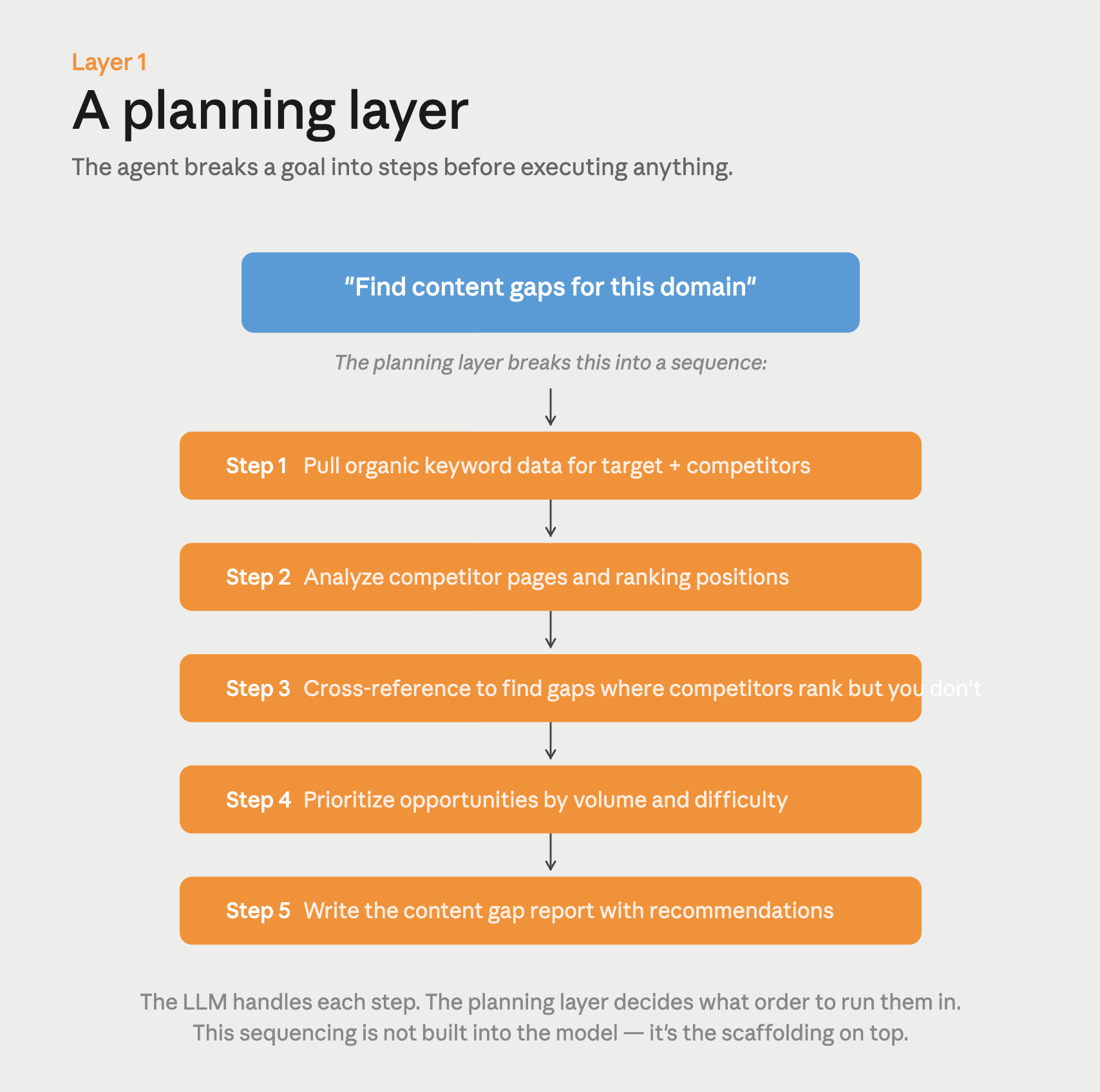

There are four layers that turn a generative model into an agentic system:

1. A planning layer

A generative model responds to one prompt at a time. An agentic system takes a goal and breaks it into steps before executing anything.

When you tell Agent A to “find content gaps for this domain,” it doesn’t answer right away: it decides to pull organic keyword data first, then analyze competitor pages, then cross-reference the results. That sequencing isn’t built into the language model itself. It’s handled by a planning loop that sits on top of it, prompting the model repeatedly and using each output to decide what comes next.
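Here is a minimal sketch of what such a planning loop could look like. It is an illustration under assumptions, not how Agent A is implemented: `fake_model` is a hypothetical stand-in for a real language model call, and the step names are invented.

```python
def fake_model(goal, completed):
    """Hypothetical stand-in for an LLM call: returns the next step, or 'DONE'."""
    playbook = [
        "pull_organic_keywords",
        "analyze_competitor_pages",
        "cross_reference_results",
    ]
    for step in playbook:
        if step not in completed:
            return step
    return "DONE"


def plan_and_run(goal, model=fake_model, max_steps=10):
    """Ask the model for the next step repeatedly until it says the goal is met."""
    completed = []
    for _ in range(max_steps):  # hard cap so the loop can never run forever
        next_step = model(goal, completed)
        if next_step == "DONE":
            break
        completed.append(next_step)  # a real system would execute the step here
    return completed


print(plan_and_run("find content gaps for this domain"))
```

Notice that the sequencing lives in the loop, not in the model: the model only ever answers “what next?” given what has already been done.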

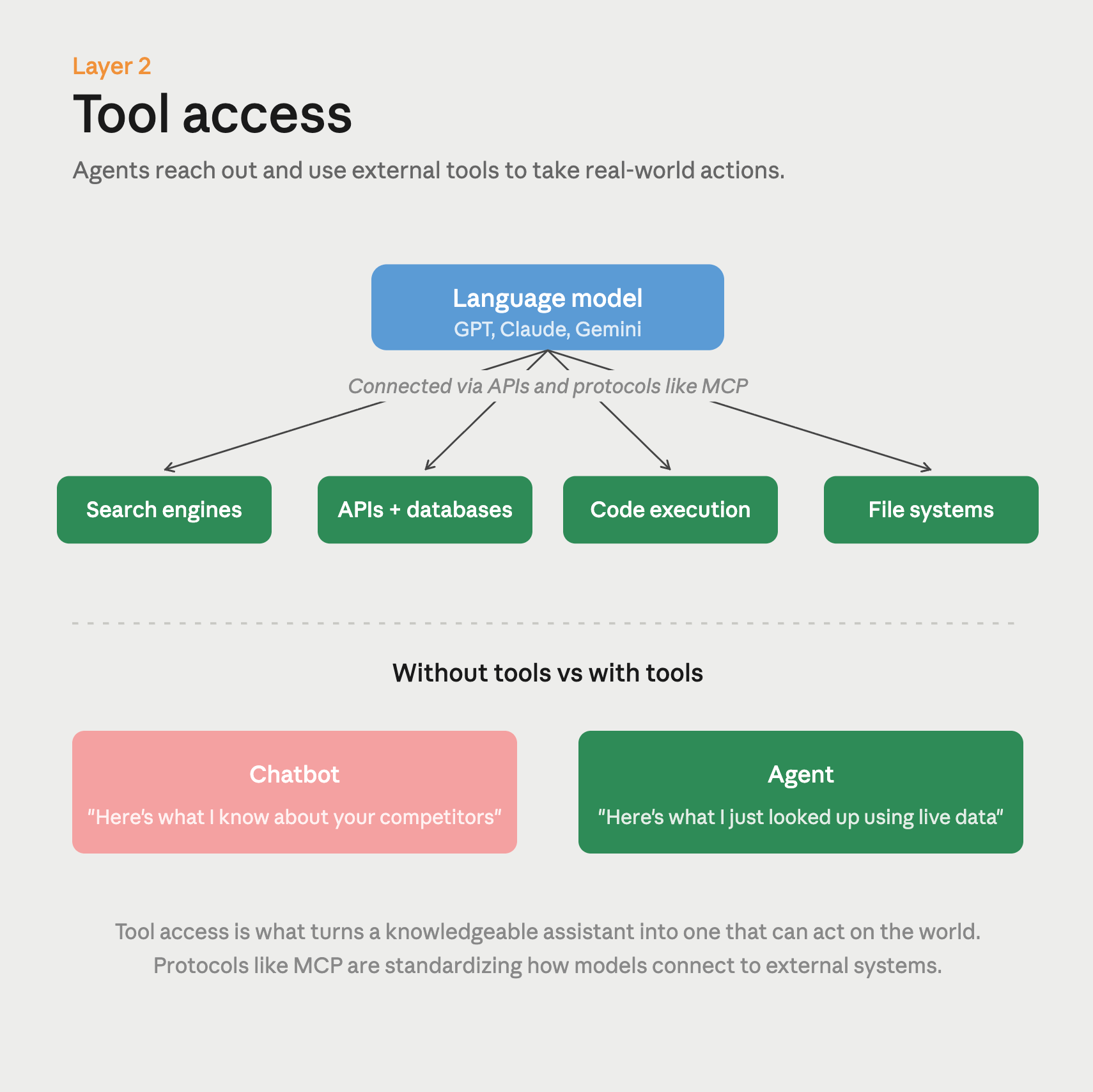

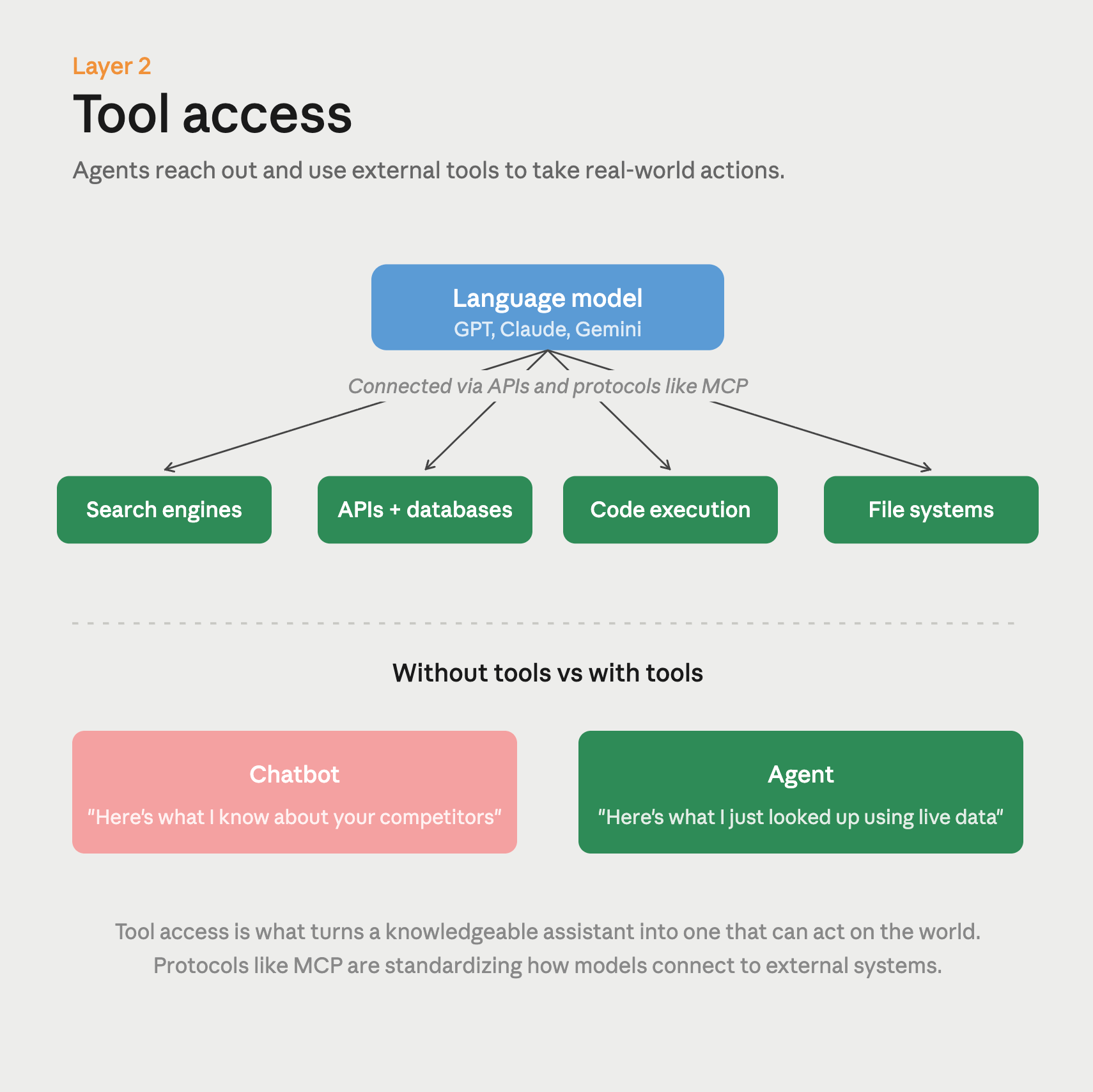

2. Tool access

A chatbot can only work with what’s in its training data and whatever you paste into the prompt. An agent can reach out and use external tools—search engines, APIs, databases, code execution environments, file systems.

This is how an agentic system goes from “here’s what I know about your competitors” to “here’s what I just looked up about your competitors using live data.” Protocols like Anthropic’s Model Context Protocol (MCP) are standardizing how models connect to external tools, which is making it much easier to give agents access to the systems they need. (You can use Ahrefs’ official MCP in Claude and ChatGPT.)
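To make tool access concrete, here is a rough sketch of the general pattern: the model emits a structured request, and the surrounding system looks up the named tool and runs it. This is not the MCP wire format or any real Ahrefs API; the tool name, request shape, and numbers are all invented for illustration.

```python
def keyword_volume(keyword):
    # Hypothetical stub with made-up numbers; a real tool would call a live API.
    fake_data = {"agentic ai": 100, "generative ai": 200}
    return fake_data.get(keyword.lower(), 0)


# Registry of tools the agent is allowed to use.
TOOLS = {"keyword_volume": keyword_volume}


def call_tool(request):
    """Run a tool request shaped like {'tool': name, 'args': {...}}."""
    name = request["tool"]
    if name not in TOOLS:
        return {"ok": False, "error": f"unknown tool: {name}"}
    return {"ok": True, "result": TOOLS[name](**request["args"])}


print(call_tool({"tool": "keyword_volume", "args": {"keyword": "Agentic AI"}}))
```

The registry doubles as a permission list: the agent can only reach the systems you have explicitly registered.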

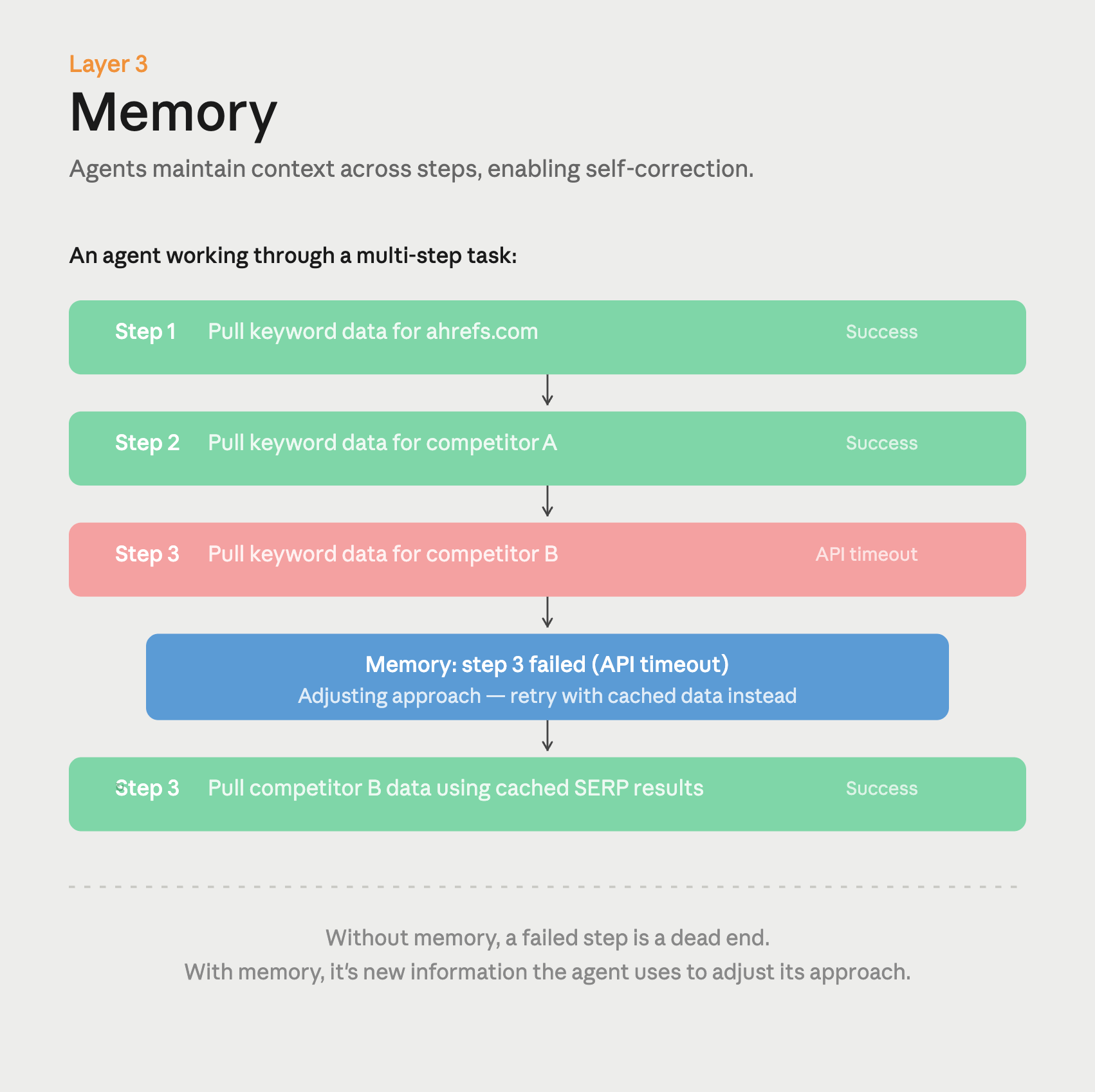

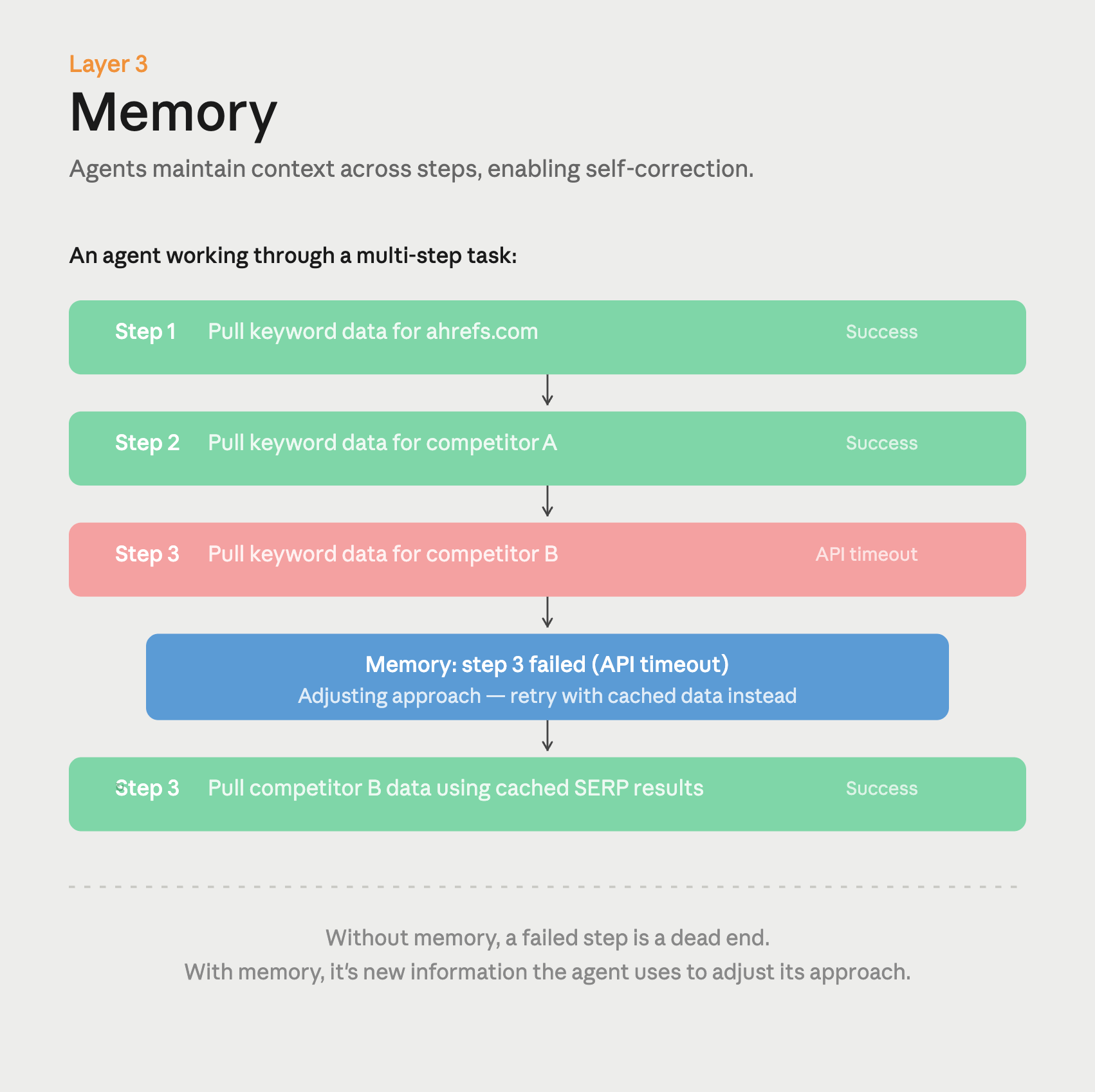

3. Memory

In a standard ChatGPT conversation, the model has no memory of what happened in previous sessions (unless you’ve turned on the memory feature, which is limited). An agentic system maintains context across the entire task, and sometimes across tasks.

It knows that step three failed, so it needs to adjust step four. It remembers that you prefer a certain format, or that a particular data source was unreliable last time. Without this persistence, an agent can’t self-correct or learn from its own mistakes mid-task.
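A simple way to picture task memory is as a small record the agent updates after every step and consults before the next one. The class and field names below are hypothetical, and real systems typically store far richer context.

```python
from dataclasses import dataclass, field


@dataclass
class TaskMemory:
    """Hypothetical scratchpad an agent keeps for the duration of a task."""
    step_results: dict = field(default_factory=dict)
    unreliable_sources: set = field(default_factory=set)

    def record(self, step, ok, source=None):
        # Remember how each step went, and which source let us down.
        self.step_results[step] = ok
        if not ok and source:
            self.unreliable_sources.add(source)

    def pick_source(self, candidates):
        # Prefer the first source that has not failed earlier in the task.
        for source in candidates:
            if source not in self.unreliable_sources:
                return source
        return None


memory = TaskMemory()
memory.record("step_3", ok=False, source="source_a")
print(memory.pick_source(["source_a", "source_b"]))
```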

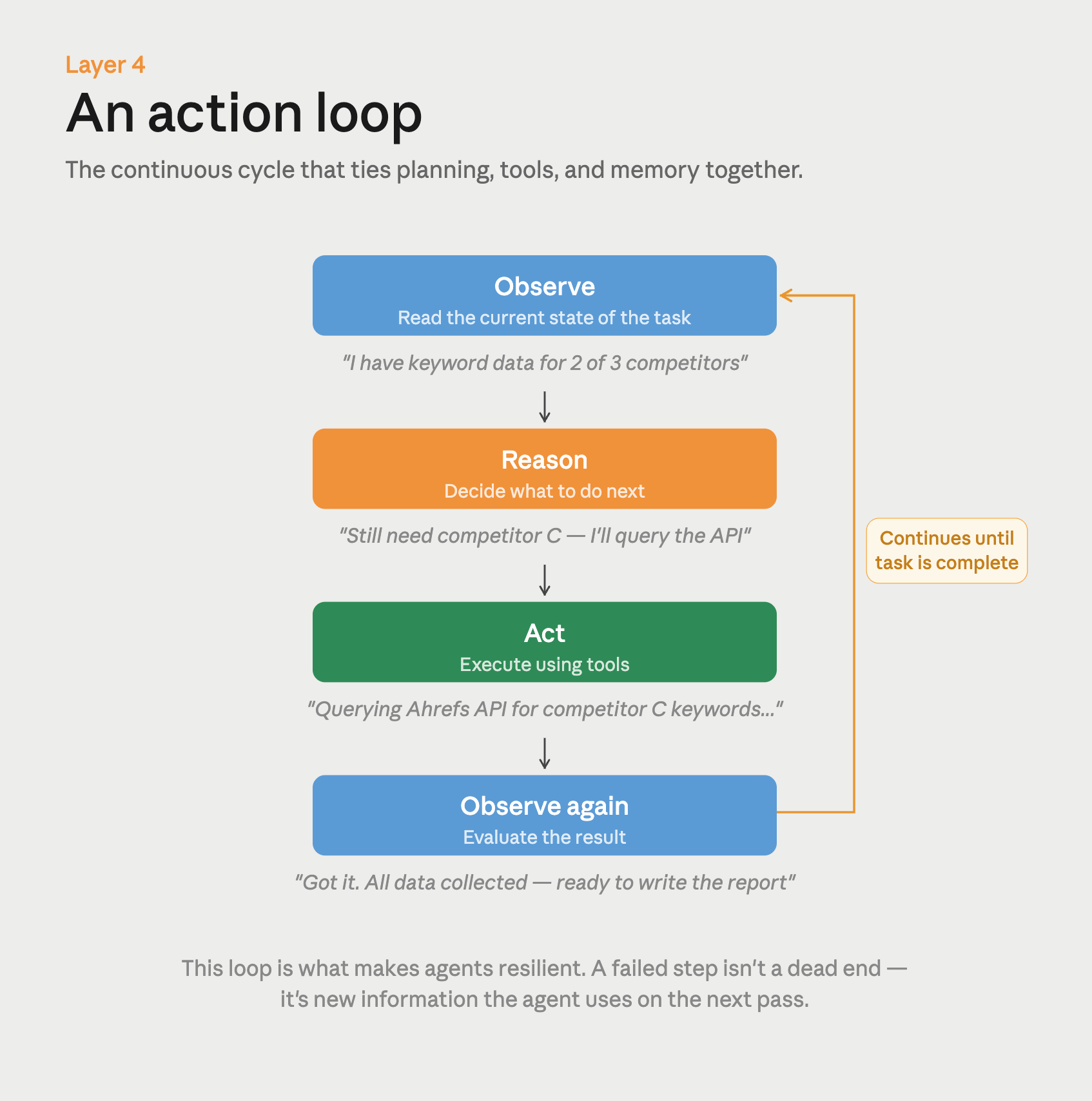

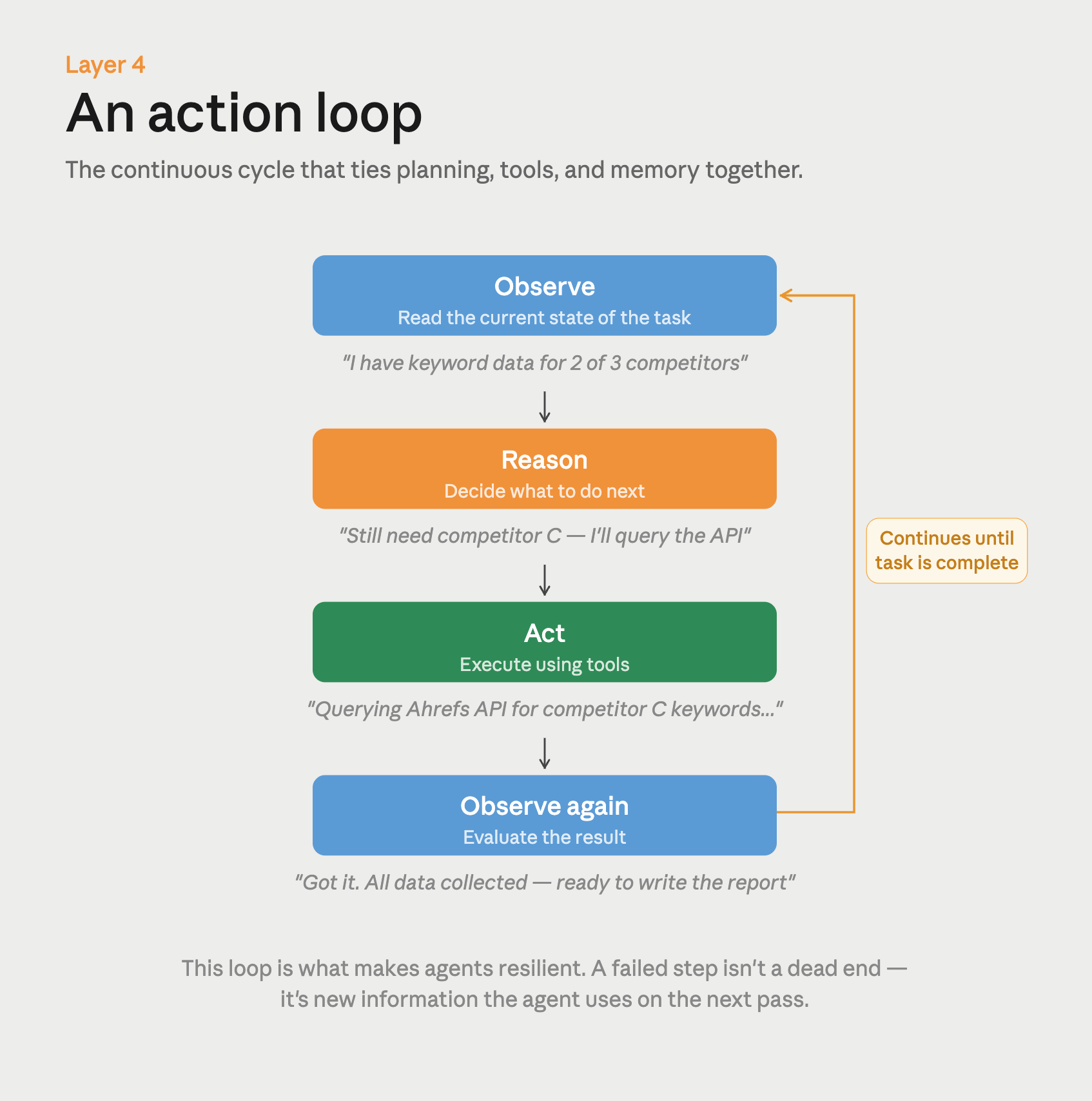

4. An action loop

This is what ties everything together. Instead of generating one response and stopping, an agentic system runs a continuous cycle: observe the current state, reason about what to do next, take an action, then observe the result. If the result isn’t right, the loop continues. This is why an agent can recover from errors that would completely stall a generative AI tool—it treats a failed step as new information, not a dead end.
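The loop itself is simple enough to sketch. In this toy version, the “reasoning” is just “pick the first action that hasn’t failed yet”; a real agent would ask the model to decide. The action names and the `attempt` callback are assumptions for illustration.

```python
def run_agent(actions, attempt, max_turns=5):
    """Observe, reason, act, repeat: a failed action becomes new information."""
    observations = []
    for _ in range(max_turns):
        # Reason: skip anything we have already seen fail.
        failed = {o["action"] for o in observations if not o["ok"]}
        remaining = [a for a in actions if a not in failed]
        if not remaining:
            break  # nothing left to try
        action = remaining[0]
        ok = attempt(action)  # Act
        observations.append({"action": action, "ok": ok})  # Observe
        if ok:
            break  # goal reached
    return observations


# Toy scenario: the primary data source is down, the backup works.
log = run_agent(["primary_api", "backup_api"], lambda a: a == "backup_api")
print(log)
```

A one-shot generative tool would stop at the first failure. Here the failure is simply recorded and the next turn routes around it.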

When you evaluate an “agentic” tool, you’re really evaluating the quality of the scaffolding: how well it plans, which tools it can access, how much context it retains, and how gracefully it handles failures. The underlying language model matters, but it’s only one piece of the system. Two agents built on the same model can perform very differently depending on how well this “orchestration layer” is designed.

These technical differences create a few key differences between generative and agentic AI:

Autonomy

Generative AI does one thing at a time. You type a prompt, it gives you an output, and then it waits for your next instruction. An agentic system can chain those steps together on its own: researching a topic, drafting content, checking it for errors, and scheduling it to publish, all without you stepping in between each stage. Think of it as the difference between asking an intern to write one email versus handing a project manager a brief and getting back a finished campaign.

Persistence

When you close a ChatGPT conversation and open a new one, it starts fresh. That’s generative AI—each interaction is essentially independent. Agentic AI remembers what it’s doing across steps. If it hits a problem halfway through a task, it can adjust its approach instead of just stopping. That memory is what makes complex, multi-step work possible.

Risk

A generative AI tool gives you a draft that you review before anything happens. An agentic system can take real actions, like sending emails, publishing pages, making API calls, even adjusting ad spend. That’s powerful, but it also means mistakes can cascade if you haven’t set up the right guardrails. This is why most enterprise agentic tools include human approval checkpoints before anything consequential goes live.
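An approval checkpoint can be as simple as a list of actions that always need a human “yes” first. This is a minimal sketch, not any vendor’s implementation; the action names and the `approve` callback are hypothetical.

```python
# Actions with real-world consequences always go through a human first.
CONSEQUENTIAL = {"send_email", "publish_page", "adjust_ad_spend"}


def execute(action, approve):
    """Run low-risk actions directly; ask a human before consequential ones."""
    if action in CONSEQUENTIAL and not approve(action):
        return f"blocked: {action}"
    return f"executed: {action}"


def always_deny(action):
    # Stand-in for a human reviewer who rejects the request.
    return False


print(execute("draft_summary", always_deny))
print(execute("publish_page", always_deny))
```

In practice, `approve` might post to a review queue or a chat channel and wait for a reply, but the principle is the same: nothing on the consequential list happens on the agent’s say-so alone.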

Speed to outcome

Work that currently needs a human to coordinate across multiple tools and handoffs, such as campaign builds, multi-channel reporting, or customer support resolution, can be coordinated and executed by an AI agent. Gartner predicts that agentic AI will autonomously resolve 80% of common customer service issues by 2029. Cisco estimates that 68% of customer service interactions with tech vendors will be handled by agentic AI by 2028.

Skill required

Getting good results from generative AI is mostly a writing skill. You learn to give clear prompts, iterate on the output, and spot when something isn’t quite right. Directing agentic AI is more like managing a team member. You need to set a clear goal, define what success looks like, and decide how much autonomy to give before you want to review the work. If you’re good at writing briefs and delegating, you’ll pick up agentic tools quickly.

I use a ton of AI in my marketing workflows, every day.

I use generative AI for point tasks: discrete, well-defined jobs where I know what I want and just need help producing it faster. When I publish an article, I’ll use ChatGPT or Claude to brainstorm social media copy to promote it: different angles, different hooks, different formats for each platform. These are simple tasks that don’t need complex workflows, or expensive AI models that think for an hour, to execute well.

When I’m writing, I’ll sometimes use generative AI to produce standalone sections of content—a specific paragraph, a comparison table, a summary—that I then edit and weave into the full piece (if it’s any good).

And when I’m building a conference talk, I use image generation to create custom visuals and modify memes for my slides.

Nano Banana images featured heavily in my talk at Ahrefs Evolve.

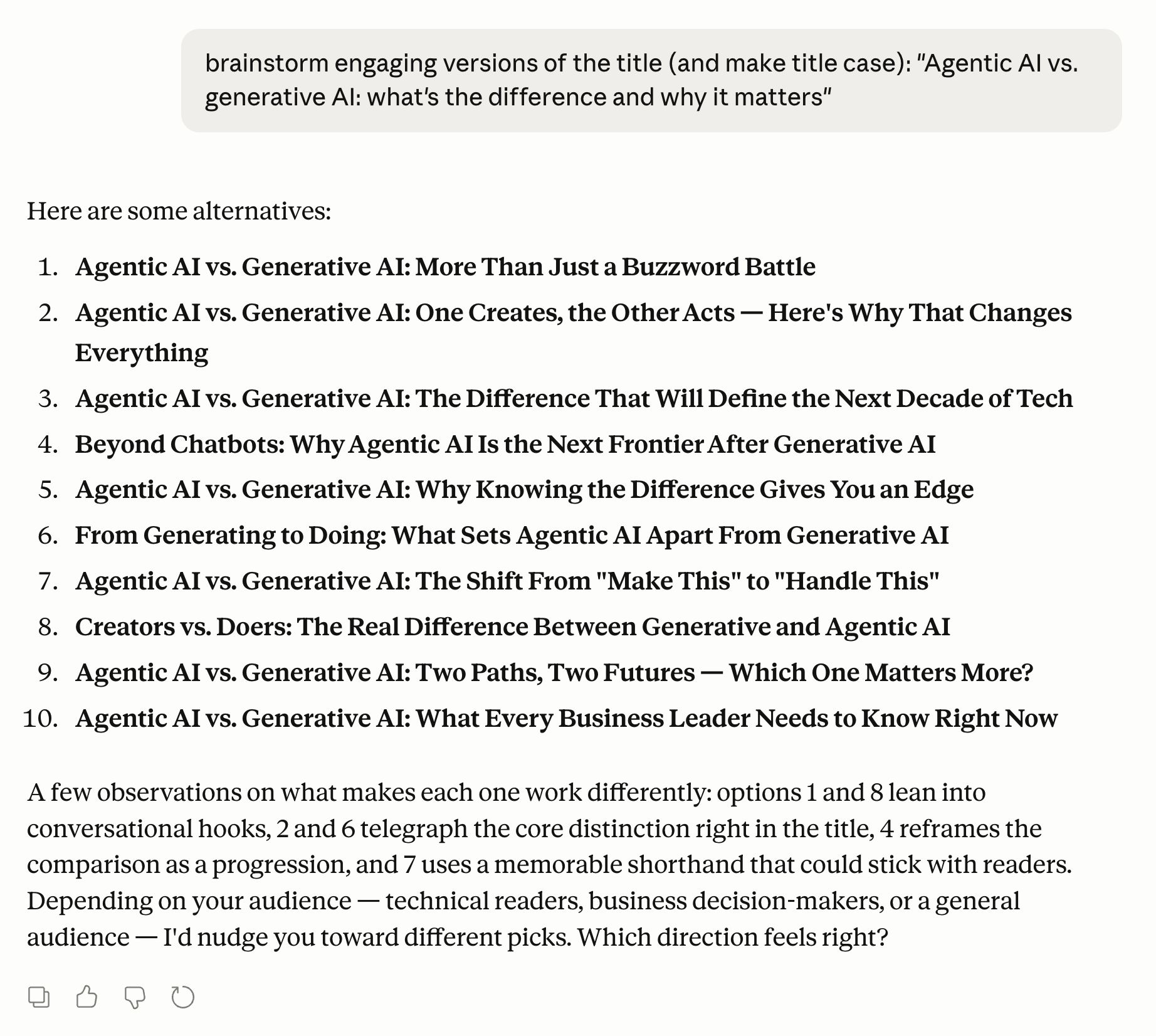

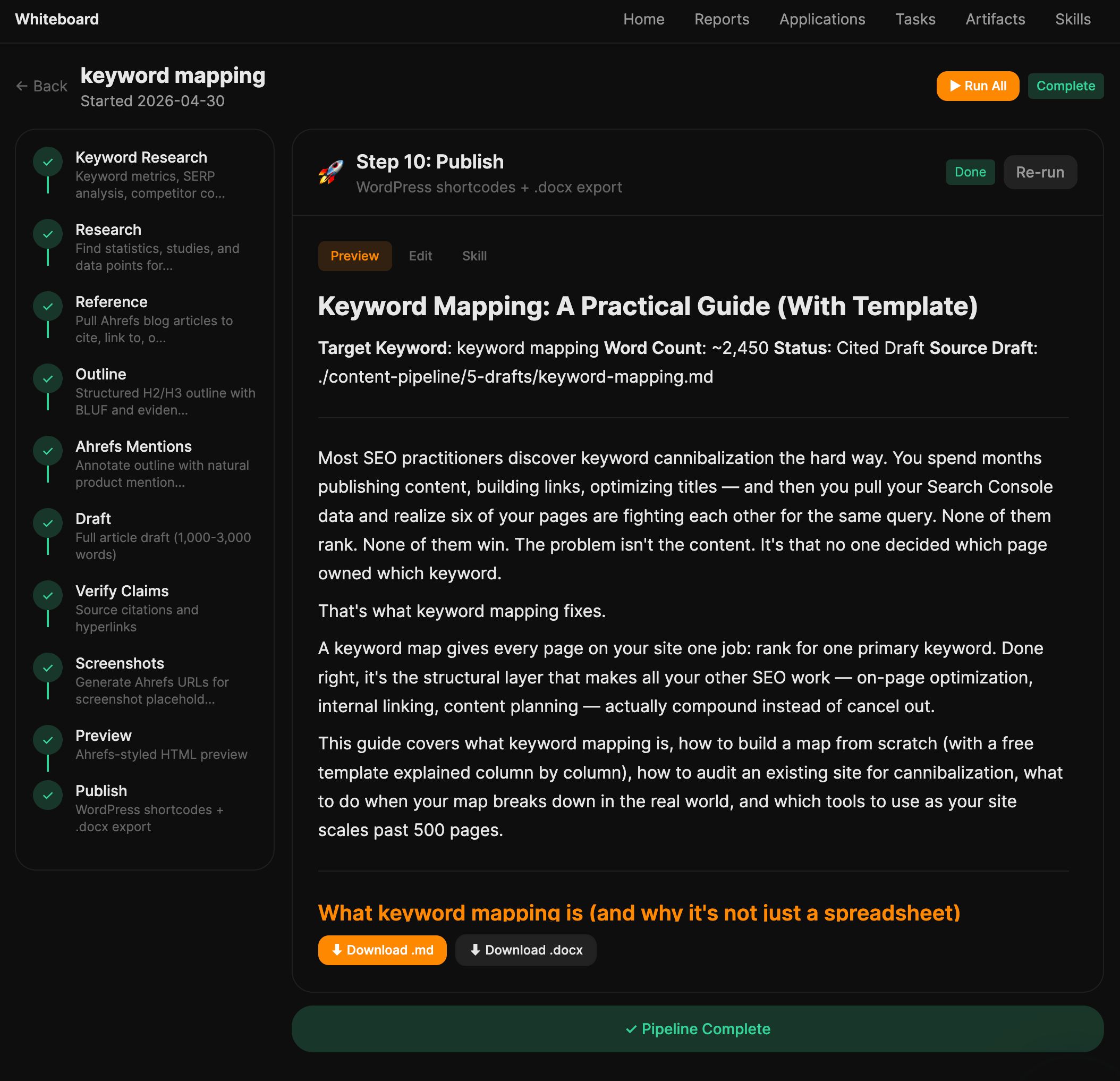

Agentic AI plays a different role entirely. I don’t use it to help me with a task, I use it to replace the task. Keyword research, content gap analysis, competitor audits: these used to be manual workflows that took me an afternoon of pulling data, cross-referencing sources, and organizing findings. Now I hand the objective to an agent and review the output.

I use Agent A to generate my monthly blog team report, complete with GSC data, keyword movements, and traffic analysis. I have a scheduled task that runs a content gap analysis for our blog, pulls keyword data from Ahrefs, and then triages each new opportunity according to its value to our business. I even write some of the articles on the blog using a workflow I built in Agent A, an application that chains together 23 skill files to update blog posts automatically. It reads the existing post, checks what’s changed, pulls fresh data, and rewrites what needs rewriting, end to end, without me managing each step.

These workflows require more capable LLMs and often cost more in token usage, but they’re still incredibly cheap when I consider the time they free up for other, more important tasks.

That said, most marketing teams haven’t yet operationalized agentic tools beyond one-off experiments. The gap between what’s possible and what’s actually getting used day-to-day is significant. And most importantly, human oversight stays essential whichever type you’re using: agentic AI amplifies your decisions, including the wrong ones, so keep a human in the loop on consequential tasks.

Final thoughts

If you want to see what agentic AI actually feels like in practice, Agent A is a good place to start. It’s built on 14 years of Ahrefs’ web index—170+ trillion pages, 41.9 billion keywords, 3.5 trillion backlinks—and it uses that data to run SEO and marketing workflows autonomously.

Give it a goal like “find content gaps against my top competitors” or “audit my site’s technical health,” and it handles the research, analysis, and reporting without you managing every step. It connects to your existing stack (including Google Analytics, Search Console, and your CMS) so the recommendations are grounded in your actual data, not generic advice.
