Here are four time-tested writing frameworks that will help both humans and AI understand your content (alongside the research that shows they work).

Dan Petrovic wrote a great article explaining why human-friendly content is AI-friendly content. In a nutshell, there is a striking parallel between how people and AI models process text information: we both try to glean meaning from long text without spending lots of costly time and energy reviewing every word.

Or put simply: neither AI models nor people actually read content. We both skim, jump about, and use clever heuristics to find relevant pieces of text as efficiently as possible.

Kevin Indig also conducted great research looking at how AI models pay attention. They like to retrieve content with lots of entities: specific, concrete references to brands, people, and things. They seem to prioritise text that includes question/answer pairs. They look for strong, confident claims. They weight the first third of a page more strongly than the remaining two-thirds.

The only thing missing from the discussion so far is the actual writing. When rubber meets road and virtual pen meets virtual paper, how can we structure the words on the page to maximize our chances of being understood, retrieved, and cited by AI systems?

That’s a writing problem, not a data science problem, and it has solutions that predate LLMs by decades. The writing frameworks below come from military communication, management consulting, and professional journalism. They were designed to help busy humans extract information quickly—which turns out to be exactly the same job AI is trying to do.

Here are four timeless writing frameworks you can use to earn attention from humans and AI search engines alike.

BLUF—Bottom Line Up Front—means stating your conclusion, recommendation, or key finding in the first one or two sentences of any section, then supporting it.

This framework originated in military communication, where commanders need decisions fast. It became standard in management consulting, where partners bill by the hour and clients want answers instantly.

BLUF helps readers understand your key points immediately, right from the first sentence of your introduction or paragraph. It also maps directly to how transformer attention works: models weight content near the top of a section more heavily than content further down.

From Kevin’s research, 44.2% of citations come from the first 30% of content.

Here’s an example of writing without BLUF, often called “burying the lede”:

“There are many factors that influence keyword difficulty, including domain authority, backlink profiles, content quality, and search intent alignment. After analyzing thousands of SERPs, we found that backlinks remain the strongest predictor of ranking success.”

Here’s what BLUF looks like in practice, with the most important idea shared at the beginning of the paragraph:

“Backlinks remain the strongest predictor of ranking success. After analyzing thousands of SERPs, we found they outweigh domain authority, content quality, and intent alignment as ranking factors.”

You can apply the BLUF principle throughout your writing. Your introduction should share your key argument, takeaway, answer, or statistic. The first sentence of each H2 should deliver the section’s main point.

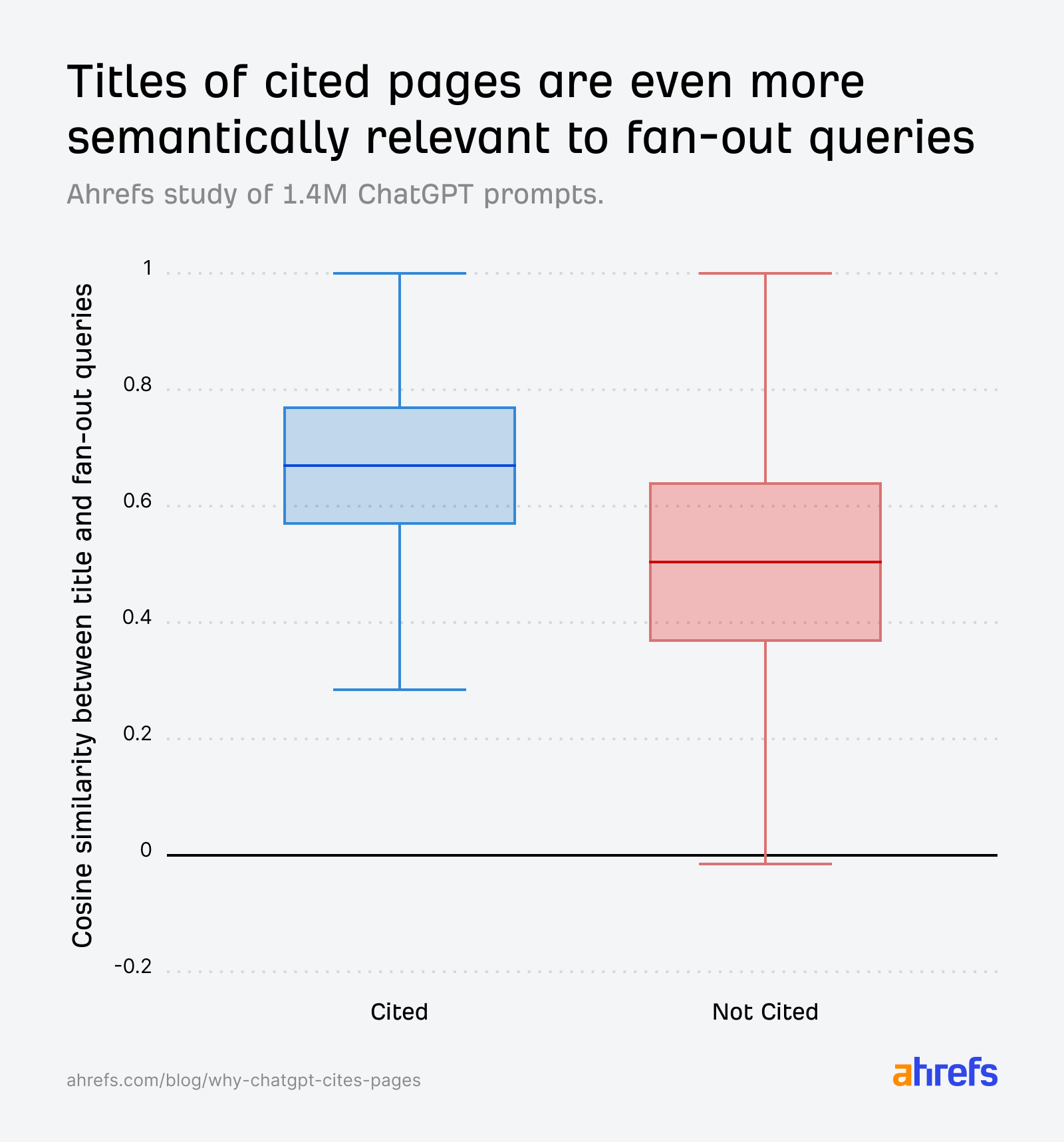

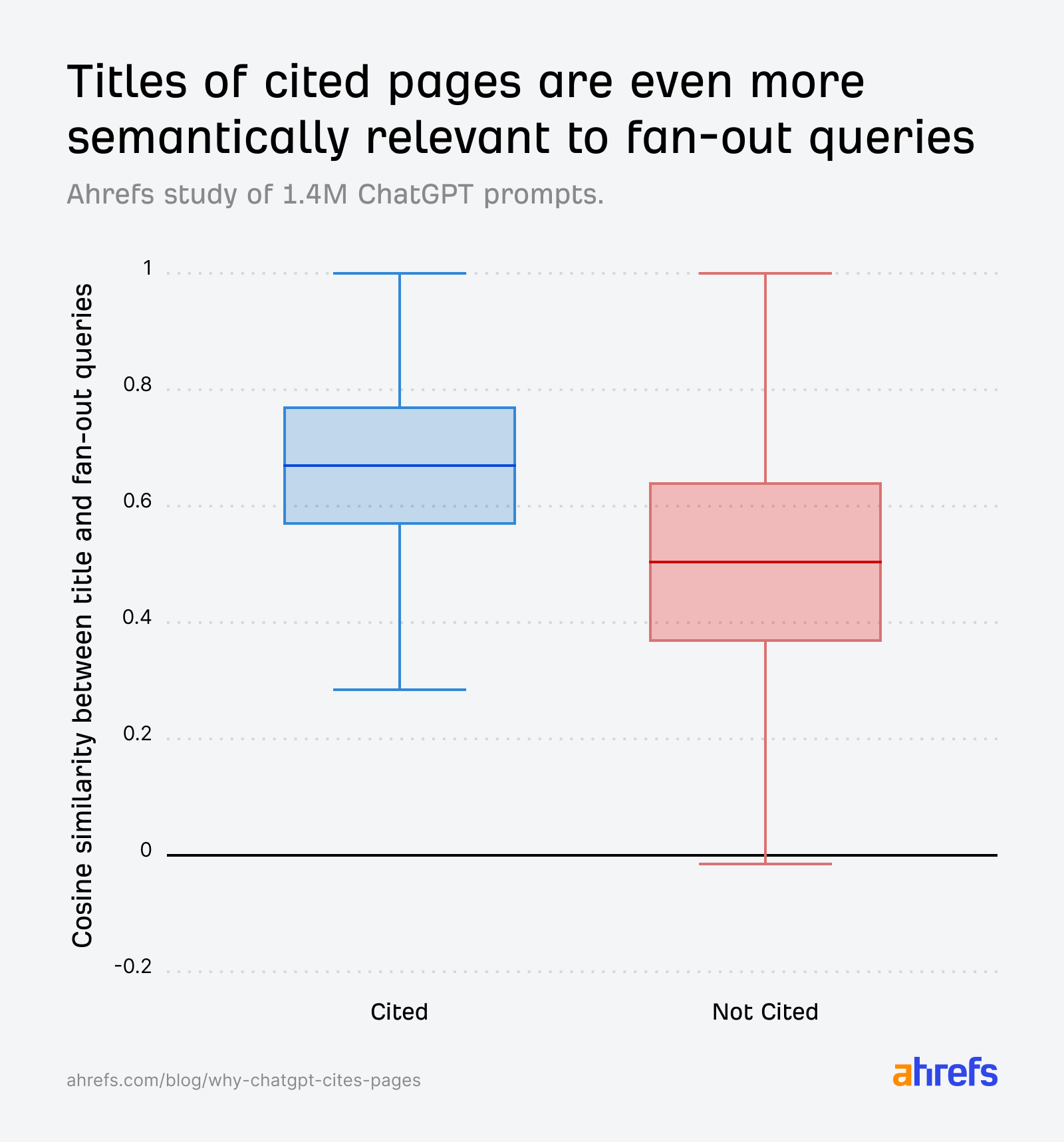

Even your titles can (and probably should) adopt BLUF. Our research found that ChatGPT “prefers” to cite pages with titles that have strong semantic similarity to the fan-out queries it uses to search for content.

In other words, the more clearly and directly your page title answers the question ChatGPT wants answered, the greater your chance of being cited.

You can test your content by reading only the first sentence of each section. If a reader can understand your entire argument from those sentences alone, you’ve implemented BLUF correctly.
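If you'd rather not do that skim by hand, here is a minimal Python sketch of the same test. It assumes each section is a block of text separated by blank lines and that a sentence ends at the first `.`, `!`, or `?`. Both are simplifications (abbreviations like "e.g." will cut a sentence short), and `first_sentences` is a name made up for this example, not part of any tool.

```python
import re


def first_sentences(text: str) -> list[str]:
    """Return the first sentence of each blank-line-separated block."""
    blocks = [b.strip() for b in re.split(r"\n\s*\n", text) if b.strip()]
    firsts = []
    for block in blocks:
        # Collapse line breaks inside the block, then cut at the first
        # sentence-ending punctuation mark followed by a space or the end.
        flat = " ".join(block.split())
        match = re.match(r"(.+?[.!?])(?:\s|$)", flat)
        firsts.append(match.group(1) if match else flat)
    return firsts


# Hypothetical two-section draft, reusing example sentences from this article.
draft = """Backlinks remain the strongest predictor of ranking success. After analyzing thousands of SERPs, we found they outweigh domain authority.

Update your title tags. They directly improve click-through rates in search results."""

skim = first_sentences(draft)
for sentence in skim:
    print(sentence)
```

Read the printed lines on their own. If they carry your argument, the draft passes the test.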

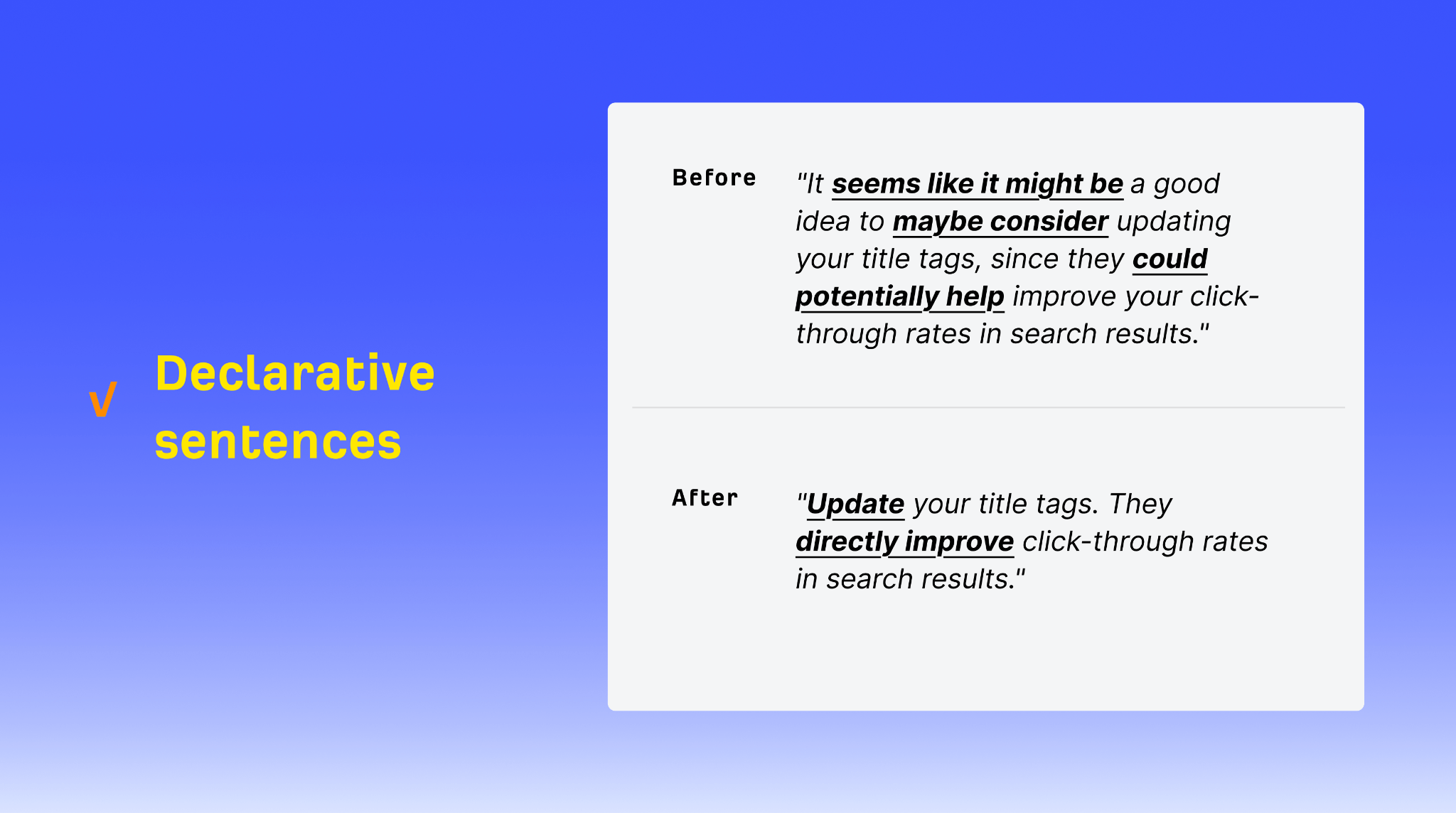

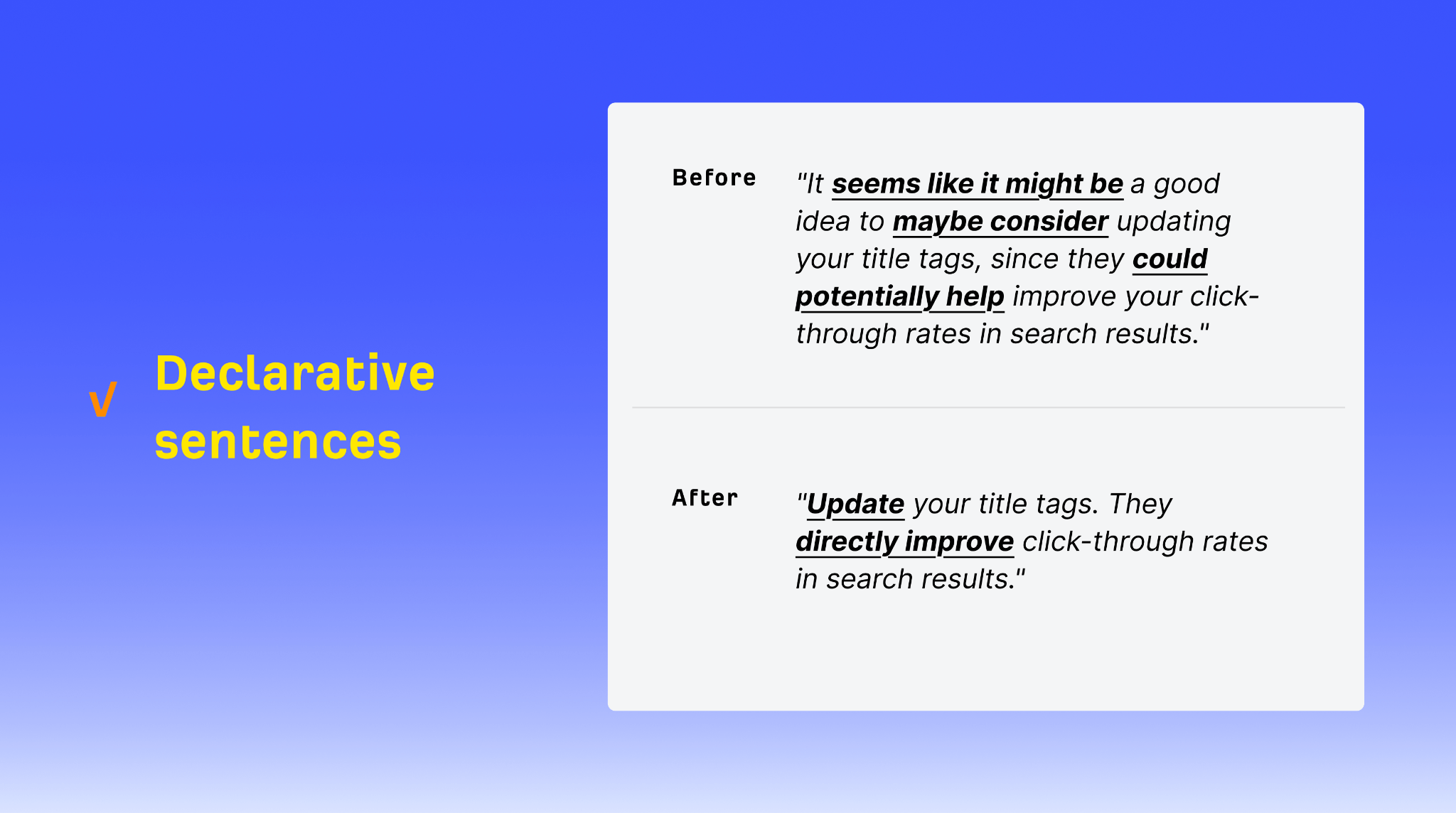

Declarative statements are definitive assertions that can stand alone as answers: “BLUF means stating your conclusion first,” “cited content has 20.6% entity density,” “the Pyramid Principle structures information from general to specific.”

This is probably the single highest-leverage change you can make for AI citation. Kevin’s research found that citation winners are almost 2x more likely (36.2% vs 20.2%) to contain definitive language: phrases like “is defined as,” “refers to,” and “means.”

This isn’t a new concept either. Tools like Steve Toth’s SnippetBrain used the same principles to optimise for featured snippets (remember those?).

Declarative statements help readers because they’re unambiguous. There’s no work required to figure out what you’re saying.

They help AI for a similar reason: AI models are looking to validate and support the claims they make, so they bias towards confident-sounding phrases that quickly and directly answer the query, ideally in a single sentence.

Here’s what a “hedged” sentence looks like, full of indirect, unconfident language:

“It seems like it might be a good idea to maybe consider updating your title tags, since they could potentially help improve your click-through rates in search results.”

Here’s what declarative writing looks like in practice:

“Update your title tags. They directly improve click-through rates in search results.”

I’ve worked with lots of English literature graduates, and academic writing trains people to hedge. “May,” “might,” “could potentially,” “it’s possible that”—these qualifiers signal intellectual humility in a research context. But in the context of AI citation, they signal uncertainty. If an AI model is choosing between your hedged sentence and a competitor’s confident one, well, yours probably loses.

Not everything should be declarative, of course. Use definitive statements for definitions, established facts, core concepts, and clear recommendations. Use qualified language for emerging research, contested claims, predictions, and edge cases.
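To audit a draft for this, a rough sketch like the one below can count definitive phrases and hedges. The two phrase lists contain only the examples quoted above, so treat them as a starting point rather than a complete vocabulary. `count_phrases` is a hypothetical helper, and simple phrase matching will produce false positives (for instance, "means" used as a noun).

```python
import re

# Phrase lists taken from the examples in this article; extend as needed.
DEFINITIVE = ["is defined as", "refers to", "means"]
HEDGES = ["may", "might", "could potentially", "it's possible that"]


def count_phrases(text: str, phrases: list[str]) -> int:
    """Count whole-word, case-insensitive occurrences of the given phrases."""
    # Normalize curly apostrophes so "it’s" matches "it's".
    normalized = text.lower().replace("’", "'")
    total = 0
    for phrase in phrases:
        pattern = r"\b" + re.escape(phrase) + r"\b"
        total += len(re.findall(pattern, normalized))
    return total


hedged = (
    "It seems like it might be a good idea to maybe consider updating "
    "your title tags, since they could potentially help improve your "
    "click-through rates in search results."
)
declarative = "BLUF means stating your conclusion first."

print("hedges in hedged sentence:", count_phrases(hedged, HEDGES))
print("definitive phrases in declarative sentence:",
      count_phrases(declarative, DEFINITIVE))
```

A high hedge count is not automatically a problem. It is a prompt to check whether each qualifier is there because the claim is genuinely uncertain.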

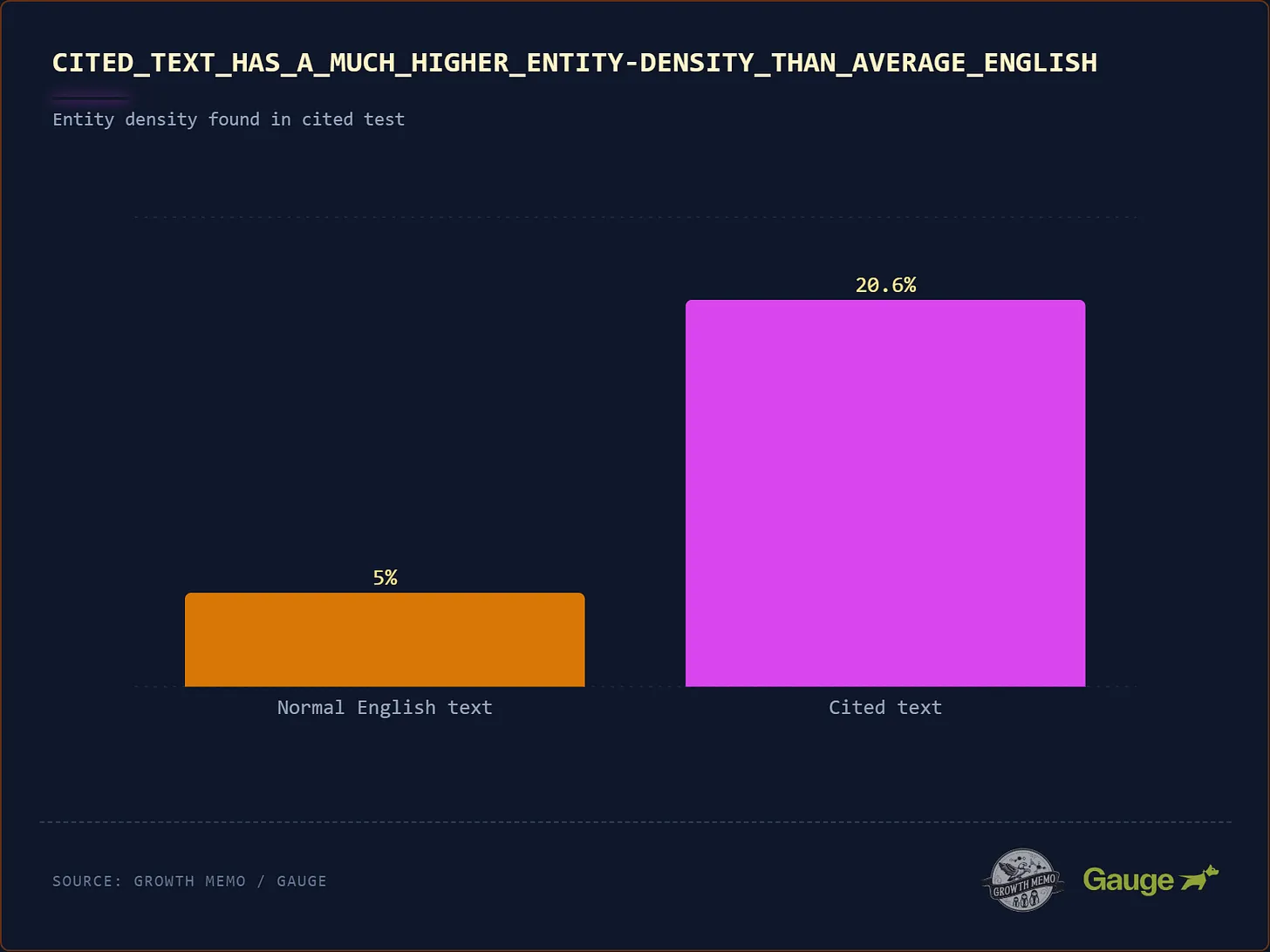

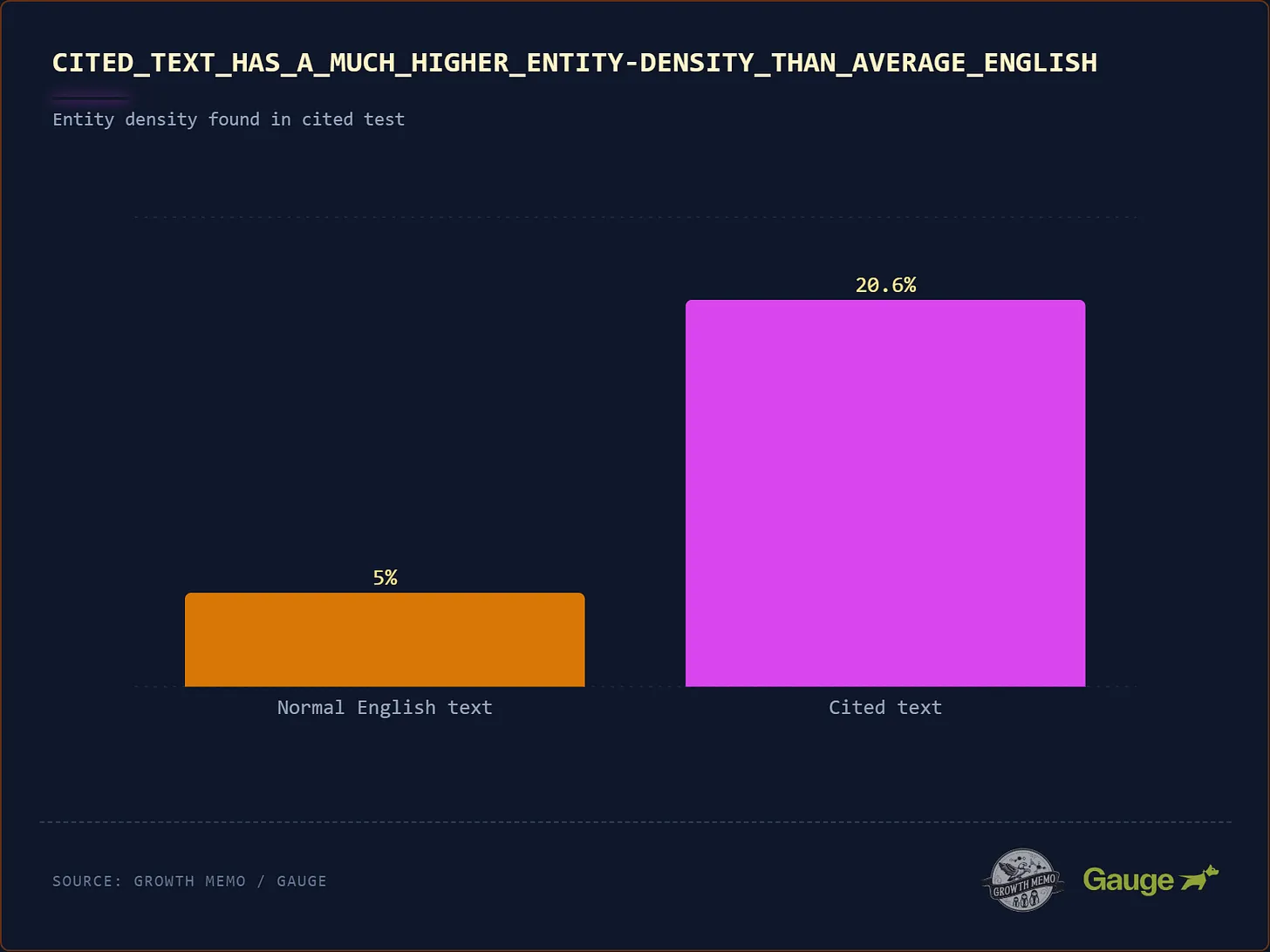

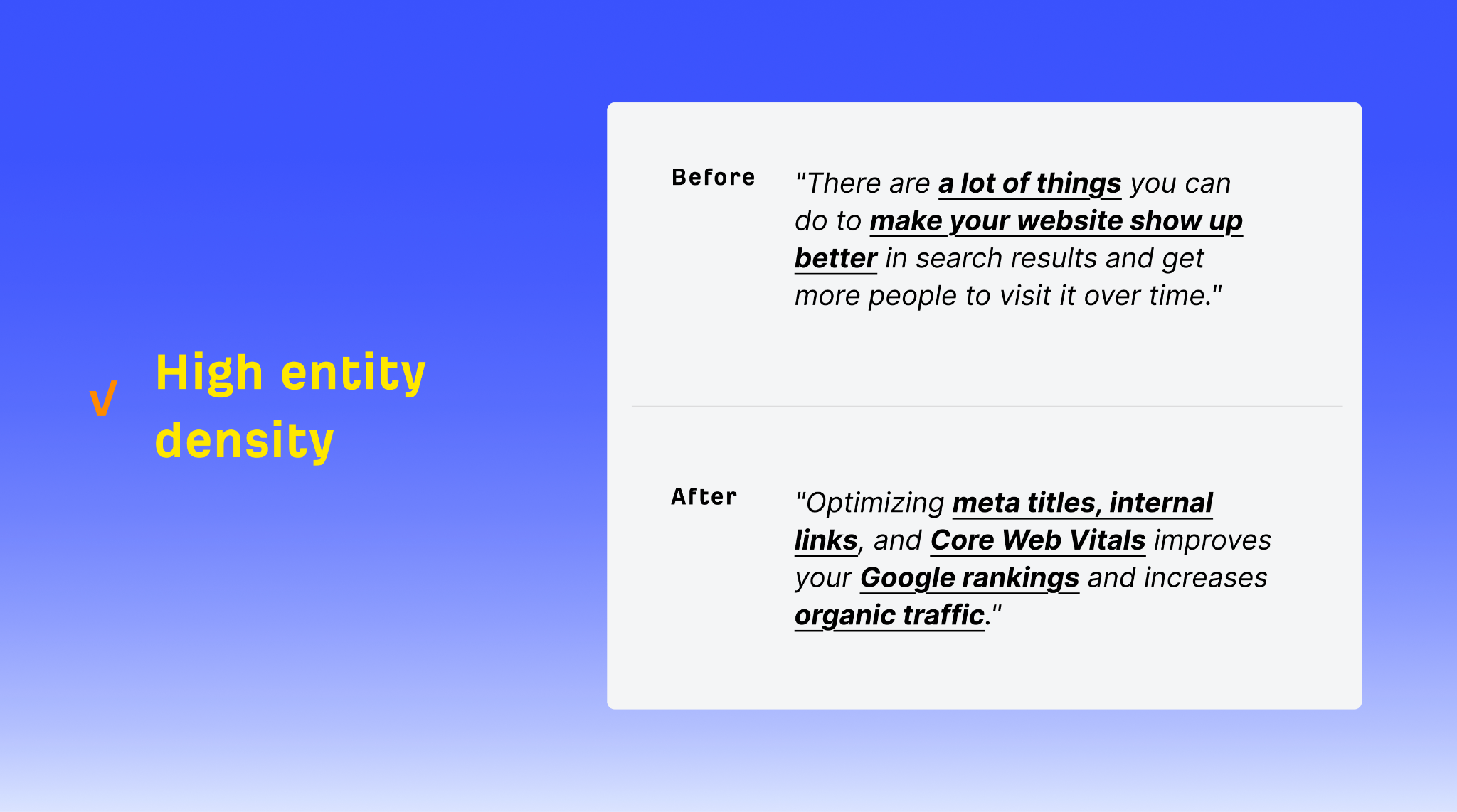

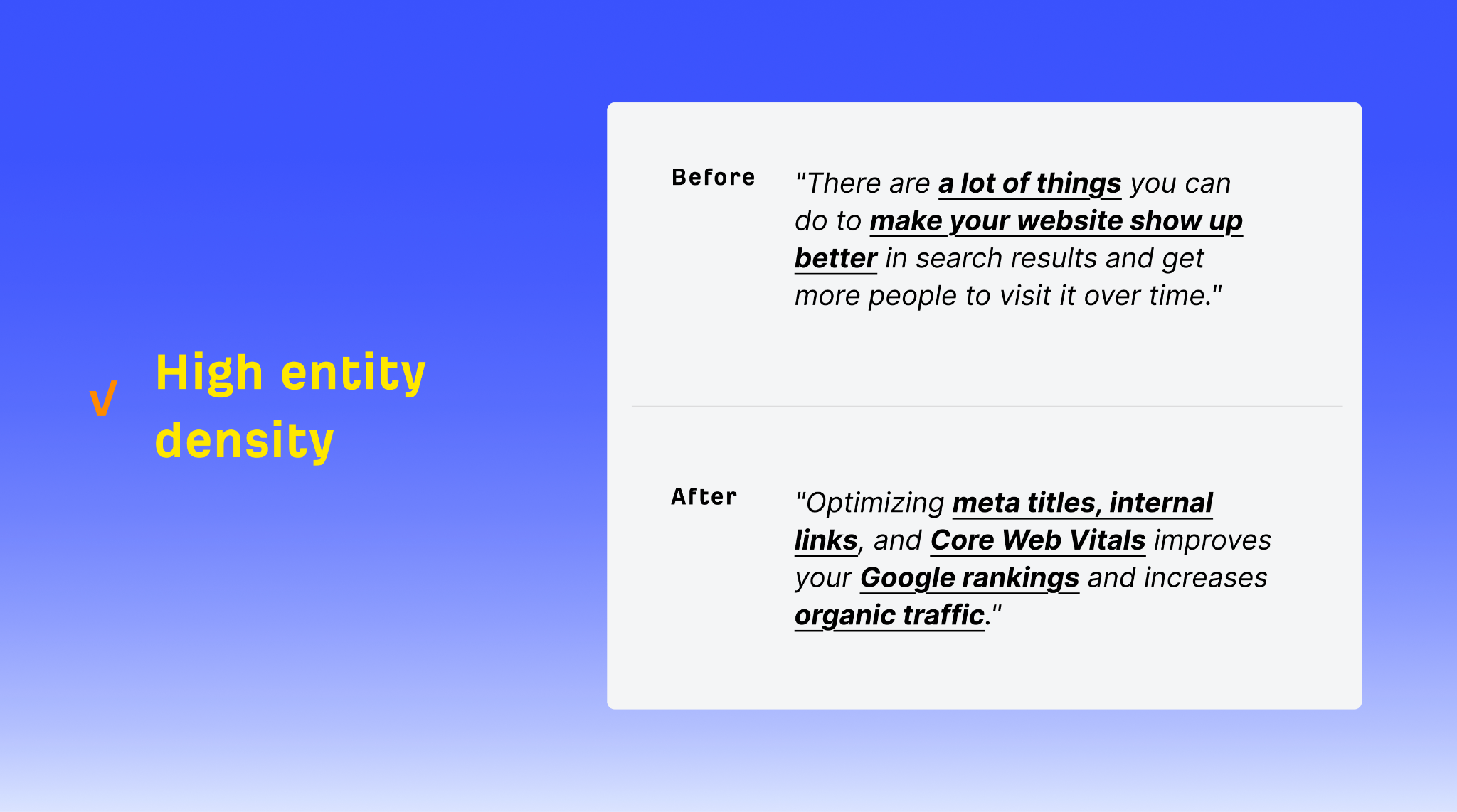

Entity density is the ratio of named entities—brands, tools, people, places, specific concepts—to total words. It seems to be one of the clearest dividing lines between content that gets cited and content that gets ignored.

Kevin’s research found that heavily cited text has an entity density of around 20.6%, compared to 5-8% in standard English prose. That’s roughly 2.5-4x the normal rate. Generic writing gets overlooked; specific writing gets cited.

Entity density helps AI models “understand” what a piece of text is about. LLMs “think” in terms of entities, and the relationships between them (like Google’s Knowledge Graph). The more entities, the easier it is to judge if a piece of content is relevant to a particular query.

The same principle applies to people: the more entities you use in writing, the more specific and detailed you are, and the more useful meaning you convey in your text.

Here’s an example of low entity density, using vague and non-specific words:

“There are a lot of things you can do to make your website show up better in search results and get more people to visit it over time.”

Here’s an example of high entity density, mentioning many specific, concrete concepts and things:

“Optimizing meta titles, internal links, and Core Web Vitals improves your Google rankings and increases organic traffic.”

The rule for writing is simple: name the tool, cite the source, specify the metric. Every time you catch yourself writing something vague and jargony (“use a tool,” “check a metric,” “according to research”), replace it with a concrete, specific example.
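For a quick sanity check on your own drafts, entity density can be approximated in a few lines of Python. This is a sketch under loud assumptions: it counts mentions from a hand-written entity list (the `ENTITIES` list below is hypothetical) rather than using a real named-entity recognizer, and it is not the method used in Kevin's research, so the numbers are not directly comparable to the 20.6% figure.

```python
import re


def entity_density(text: str, entities: list[str]) -> float:
    """Entity mentions divided by total words (a rough, list-based estimate)."""
    words = re.findall(r"[A-Za-z0-9']+", text)
    if not words:
        return 0.0
    lowered = text.lower()
    mentions = 0
    for entity in entities:
        # Whole-phrase, case-insensitive match. Overlapping entries such as
        # "Google" and "Google rankings" would be counted twice.
        pattern = r"\b" + re.escape(entity.lower()) + r"\b"
        mentions += len(re.findall(pattern, lowered))
    return mentions / len(words)


# Hypothetical entity list chosen by hand for this example.
ENTITIES = ["meta titles", "internal links", "Core Web Vitals", "Google"]

vague = (
    "There are a lot of things you can do to make your website show up "
    "better in search results and get more people to visit it over time."
)
specific = (
    "Optimizing meta titles, internal links, and Core Web Vitals improves "
    "your Google rankings and increases organic traffic."
)

for label, sentence in [("vague", vague), ("specific", specific)]:
    print(f"{label}: {entity_density(sentence, ENTITIES):.1%}")
```

The absolute values matter less than the comparison: run it on two drafts with the same entity list and see which one names more things per word.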

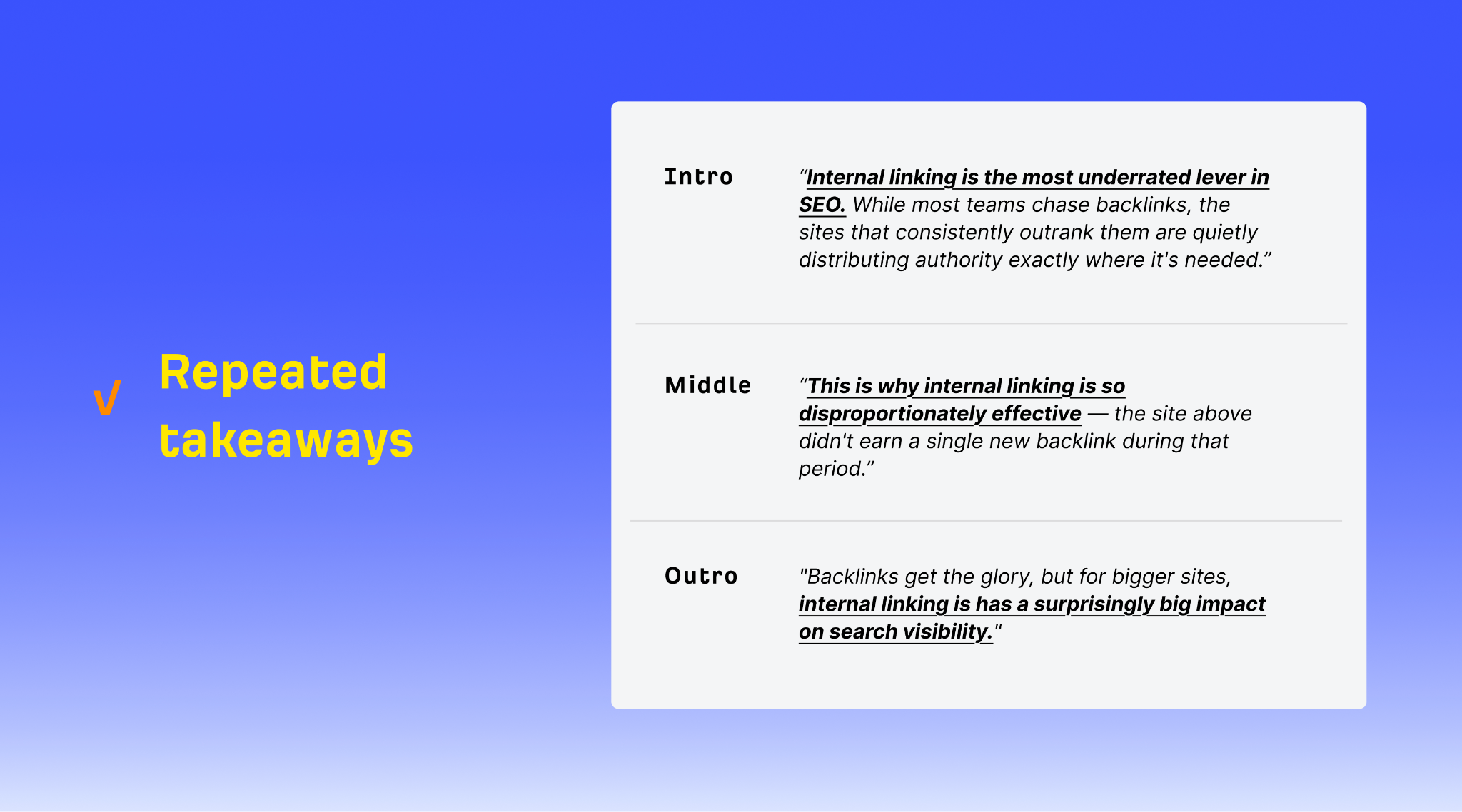

Strategic repetition means placing your most important ideas at multiple points in your content, rephrased for each context.

Repetition helps readers because people don’t consume content linearly. They arrive via search, via internal links, via scrolling past your first three sections. A reader who jumps to section five hasn’t seen your introduction. Working memory is limited, and spaced repetition increases retention. If your most important point only appears once, most of your readers will miss it.

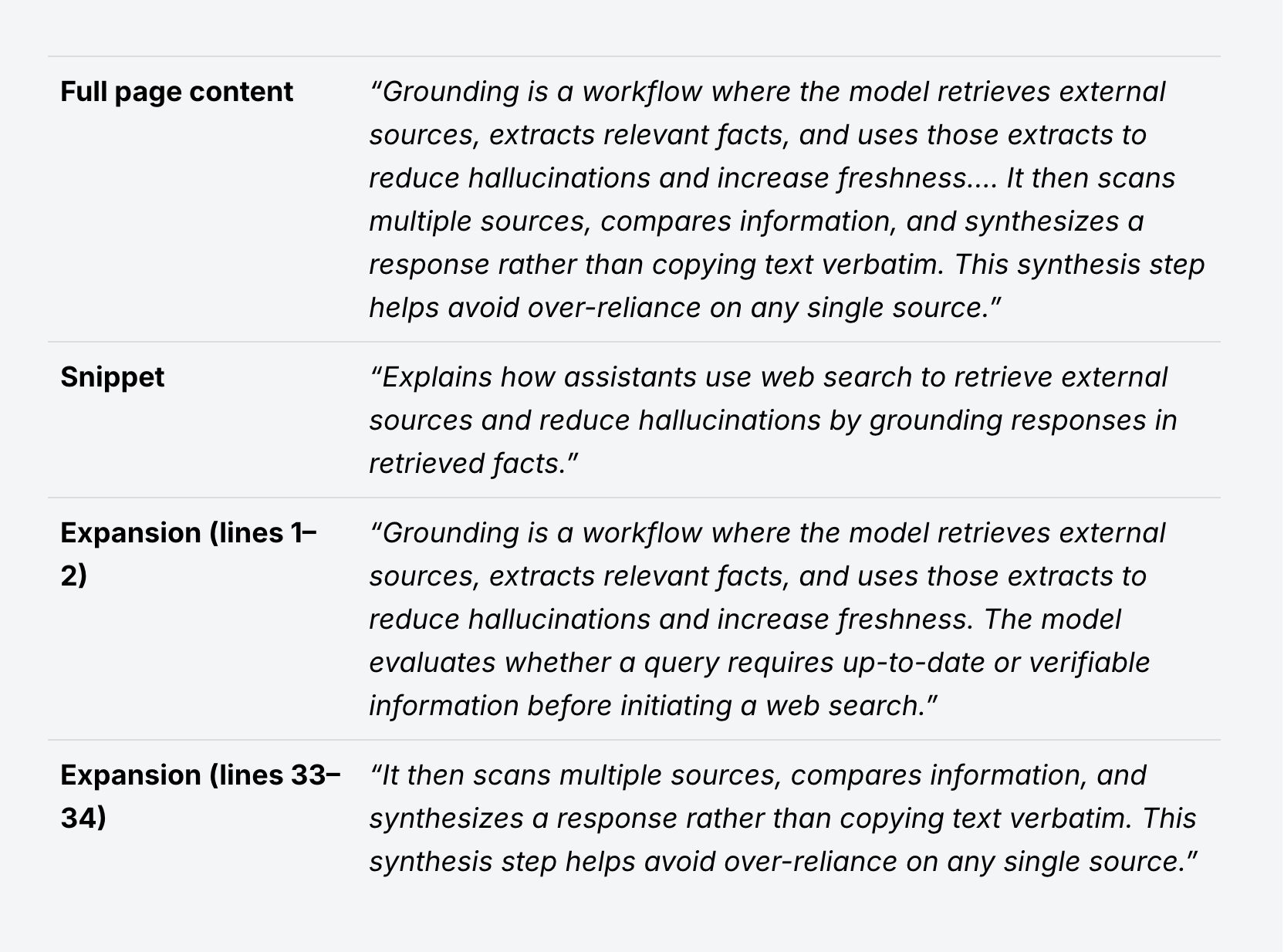

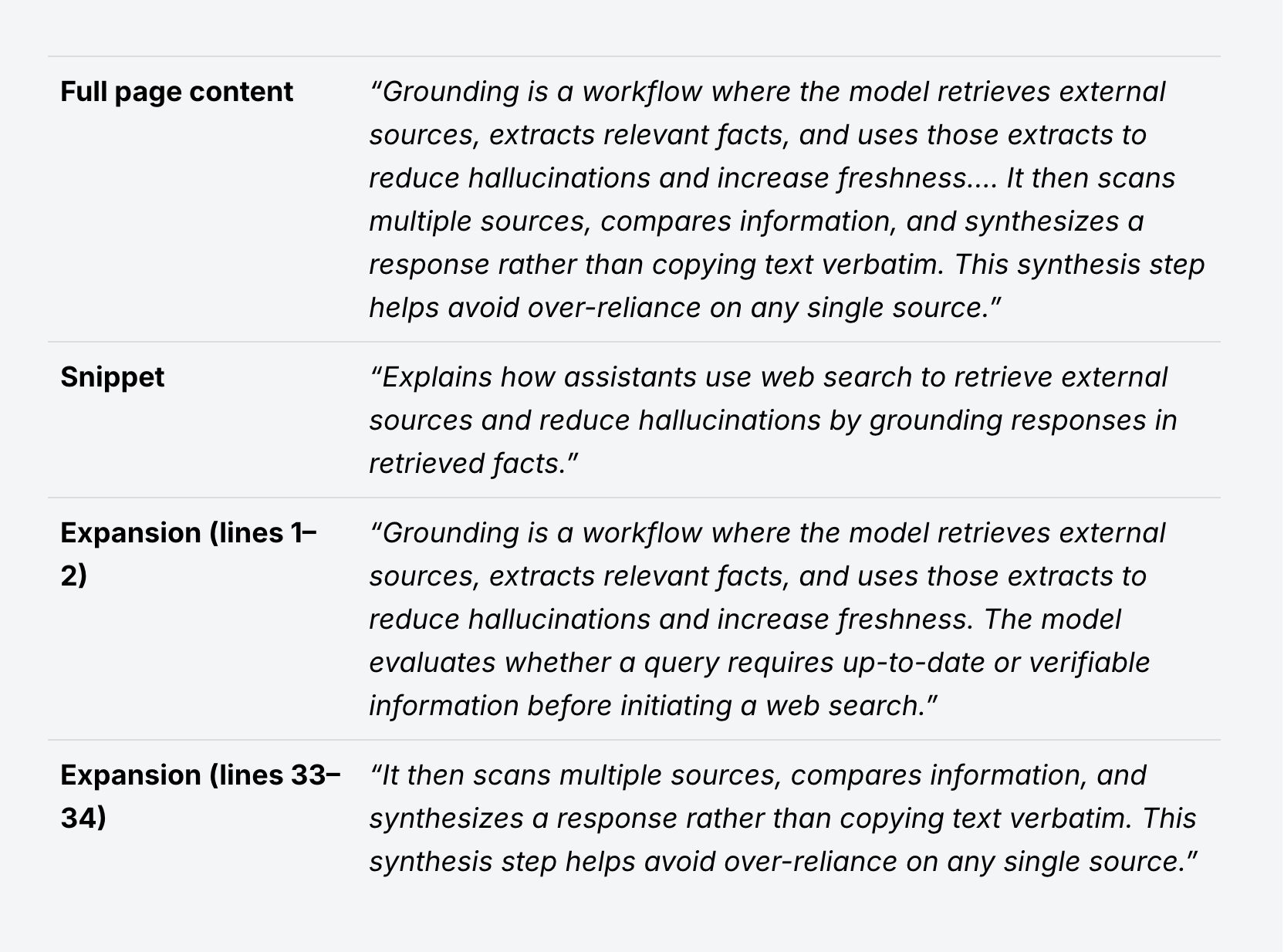

Repetition helps AI for a more mechanical reason: models don’t read your entire page. As Dan Petrovic showed, they instead retrieve snippets—short passages that match a query.

If your key insight appears only in one paragraph and the model retrieves a different snippet, that insight is invisible. Repeating it in different places, with different phrasing, creates multiple extraction opportunities. Each rephrased version also matches slightly different query fan-out formulations, increasing the odds that one of them aligns with whatever fan-out query ChatGPT is running.

Think of it this way: if AI only samples 30% of your content, repetition increases the likelihood that any given sample contains your most important point.
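The arithmetic behind that intuition can be sketched as follows. It assumes, purely for illustration, that each placement of your key idea has the same independent chance of landing in the sampled portion. Real retrieval is not random sampling, so read the output as a direction rather than a prediction. The function name is made up for this example.

```python
def chance_of_being_sampled(sample_rate: float, placements: int) -> float:
    """Probability that at least one of `placements` copies is sampled,
    assuming each copy is sampled independently with `sample_rate`."""
    return 1 - (1 - sample_rate) ** placements


# 30% sample rate, matching the hypothetical figure used in the text above.
for placements in (1, 2, 3):
    probability = chance_of_being_sampled(0.30, placements)
    print(f"{placements} placement(s): {probability:.1%}")
```

Under these assumptions, going from one mention to three roughly doubles the chance that your key point is seen, with diminishing returns after that.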

It makes sense to mention key ideas in your introduction…

“Internal linking is the most underrated lever in SEO. While most teams chase backlinks, the sites that consistently outrank them are quietly distributing authority exactly where it’s needed.”

…to repeat them mid-article, as a contextual reminder…

“This is why internal linking is so disproportionately effective: the site above didn’t earn a single backlink during that period.”

…and finally reinforce your point with a summary in the conclusion:

“Backlinks get the glory, but for bigger sites, internal linking has a surprisingly big impact on search visibility.”

Each version reframes the same core idea, adding extra context with each repetition—and increasing the likelihood that people and machines will find and understand it.

The data suggests that these simple writing frameworks benefit both human readers and AI search engines hunting for content to retrieve and cite.

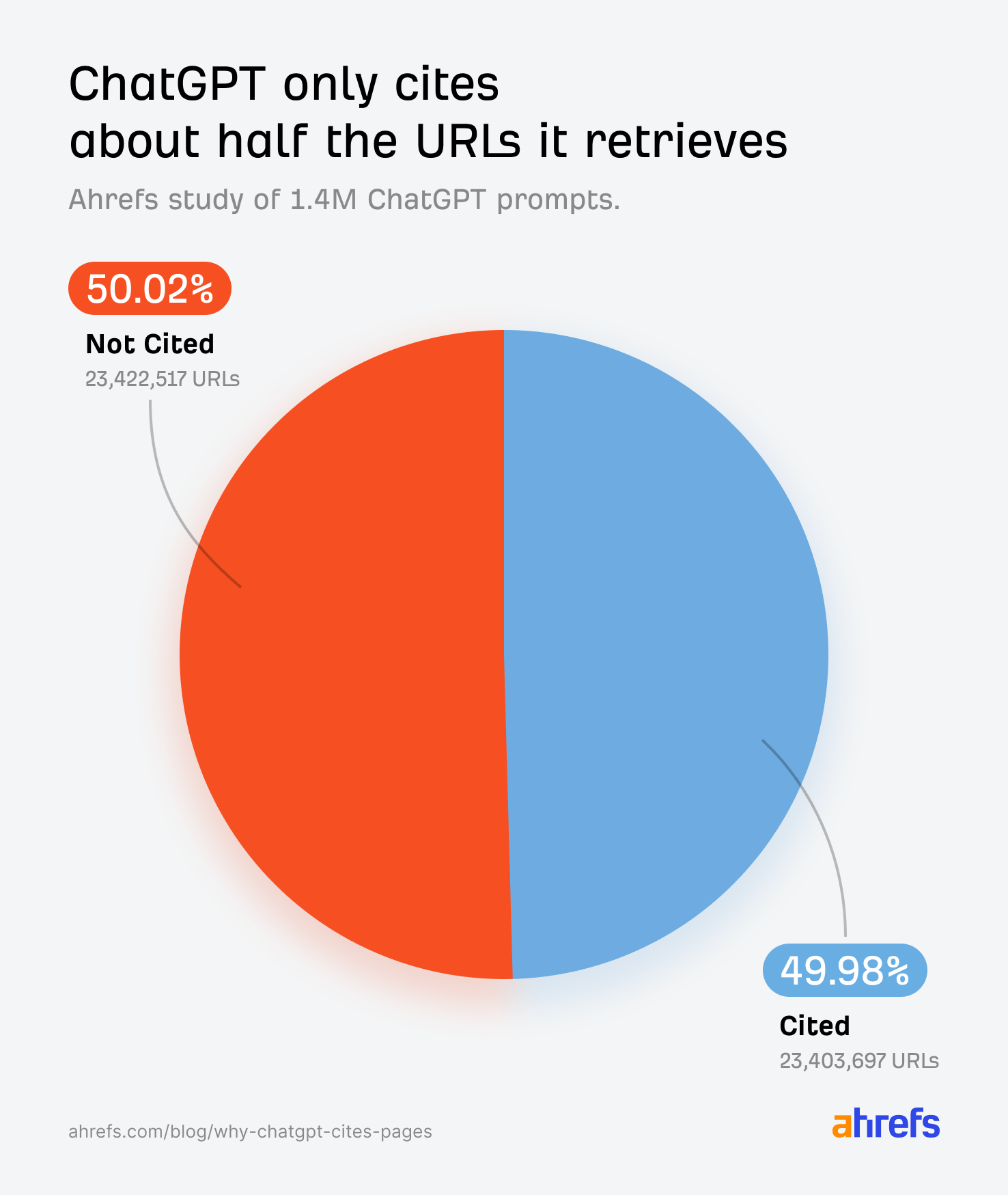

But crucially, these are not magic fixes: your content needs to rank. Our study of 1.4 million ChatGPT prompts found that 88% of cited URLs come from ChatGPT’s general search index. If you’re not showing up in search results, on-page writing quality is a moot point.

But once you are ranking, how you write determines whether you get cited or ignored—ChatGPT only cites about half the URLs it retrieves. That gap is what these frameworks are designed to close.

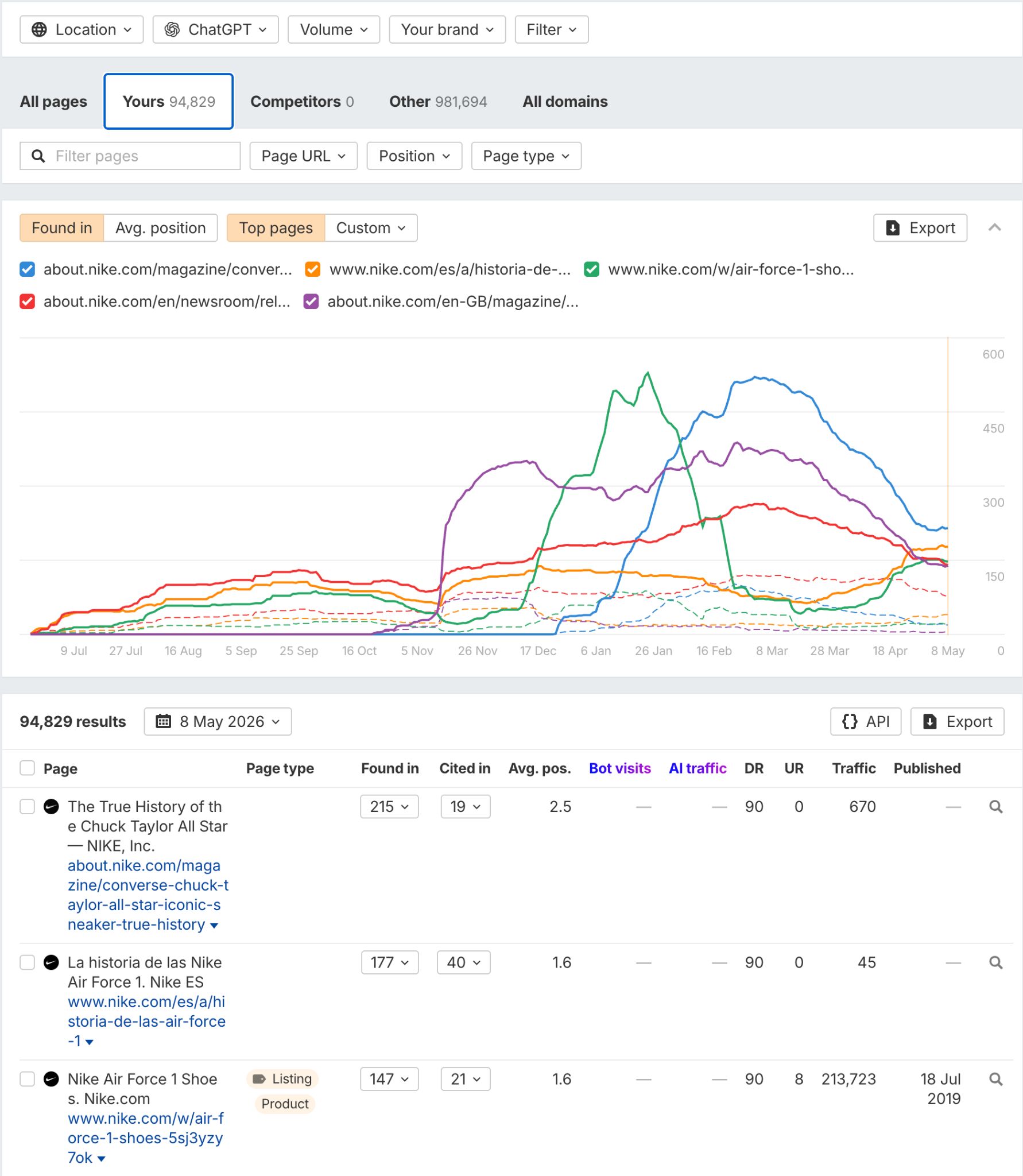

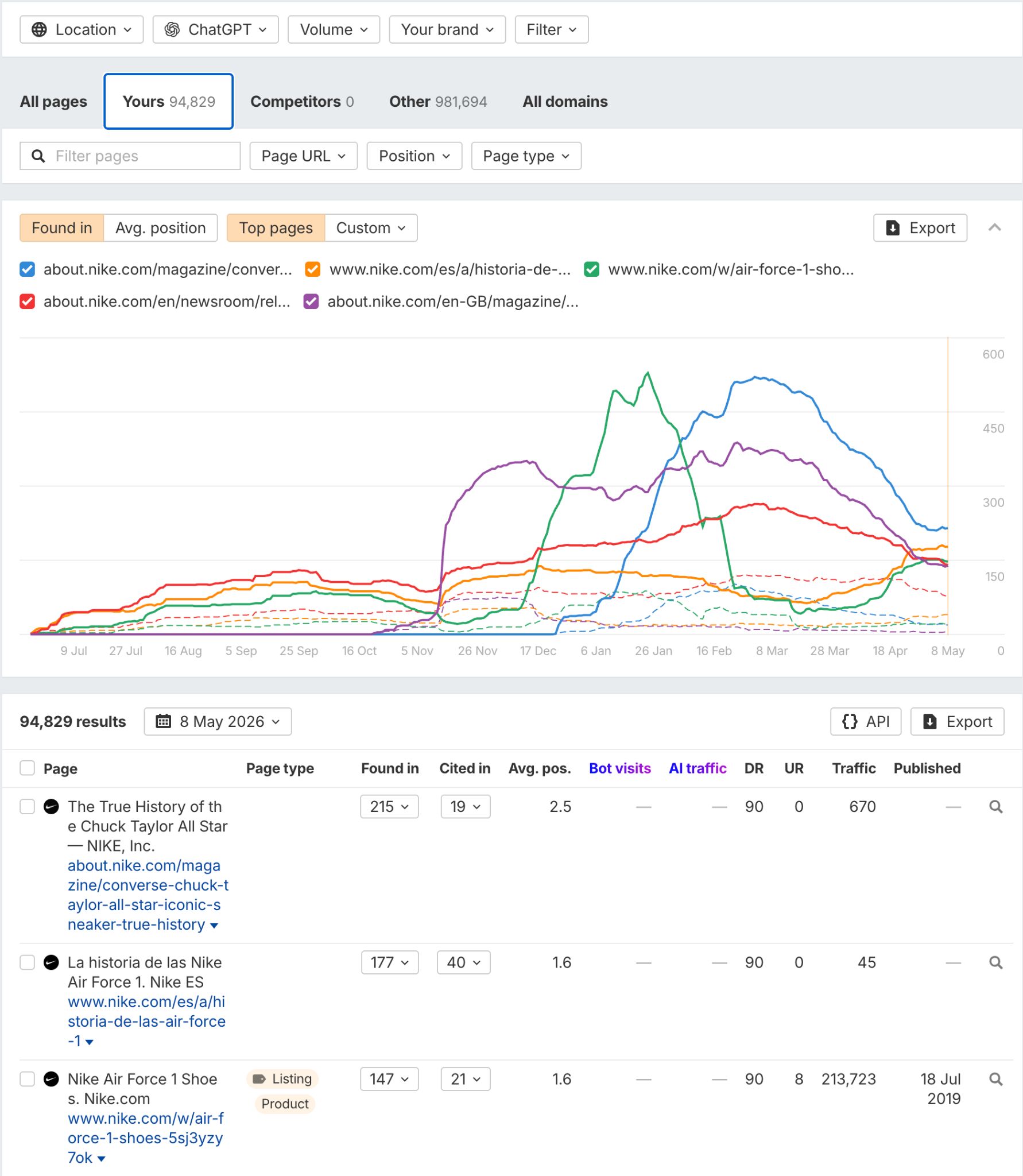

You can use Ahrefs Brand Radar to see which AI platforms cite you—ChatGPT, Perplexity, Claude, Google AI Overviews, AI Mode, Grok—and which specific URLs get quoted. Just enter your brand and domain, head to the Cited pages report, and click the Yours tab to see your most-cited URLs (and how their citations change over time):

![Ahrefs Brand Radar Cited pages report](https://www.sme-insights.co.uk/wp-content/uploads/2026/05/word-image-196828-1-1068x600.jpg)